LLM visibility measures how often and how favorably your brand appears in answers generated by AI engines like ChatGPT, Perplexity, Google AI Overviews, and Claude. Unlike traditional SEO rankings, LLM visibility is binary: your brand is either in the AI answer or it isn't, with no position-2 fallback. Because AI search visitors convert at dramatically higher rates than organic search visitors, B2B SaaS companies that ignore LLM visibility are increasingly invisible to their most valuable buyers.

What Is LLM Visibility and How Is It Different from Traditional SEO Visibility?

LLM visibility measures whether your brand appears, and how prominently, inside the actual text of AI-generated answers, not just in a list of blue links on a search results page.

According to Level Agency's AI SEO glossary, LLM visibility tracks four distinct dimensions: mention rate (how often your brand appears across a defined prompt set), mention position (whether you're cited first or as an afterthought), sentiment score (whether the AI describes you positively or neutrally), and AI share of voice (your brand's presence relative to competitors across all relevant AI-generated answers). Search Atlas research from April 2026 makes the distinction concrete for SaaS brands: LLM visibility directly shapes brand discovery, the sentiment embedded in AI recommendations, and share of voice in competitive software categories. None of that appears in Google Search Console.

In traditional SEO, ranking fourth still earns some clicks. In the AI answer layer, if your brand isn't cited, it doesn't exist for that query.

One concept that sharpens this further is Query Fan Outs. When a user types a prompt into ChatGPT or Perplexity, the LLM internally expands it into multiple related sub-queries before generating an answer. A prompt like "best project management tool for B2B SaaS" might fan out into sub-queries about pricing, integrations, user reviews, onboarding complexity, and enterprise security. Your brand must be authoritative across a cluster of related topics to reliably appear in AI answers. One keyword isn't enough.

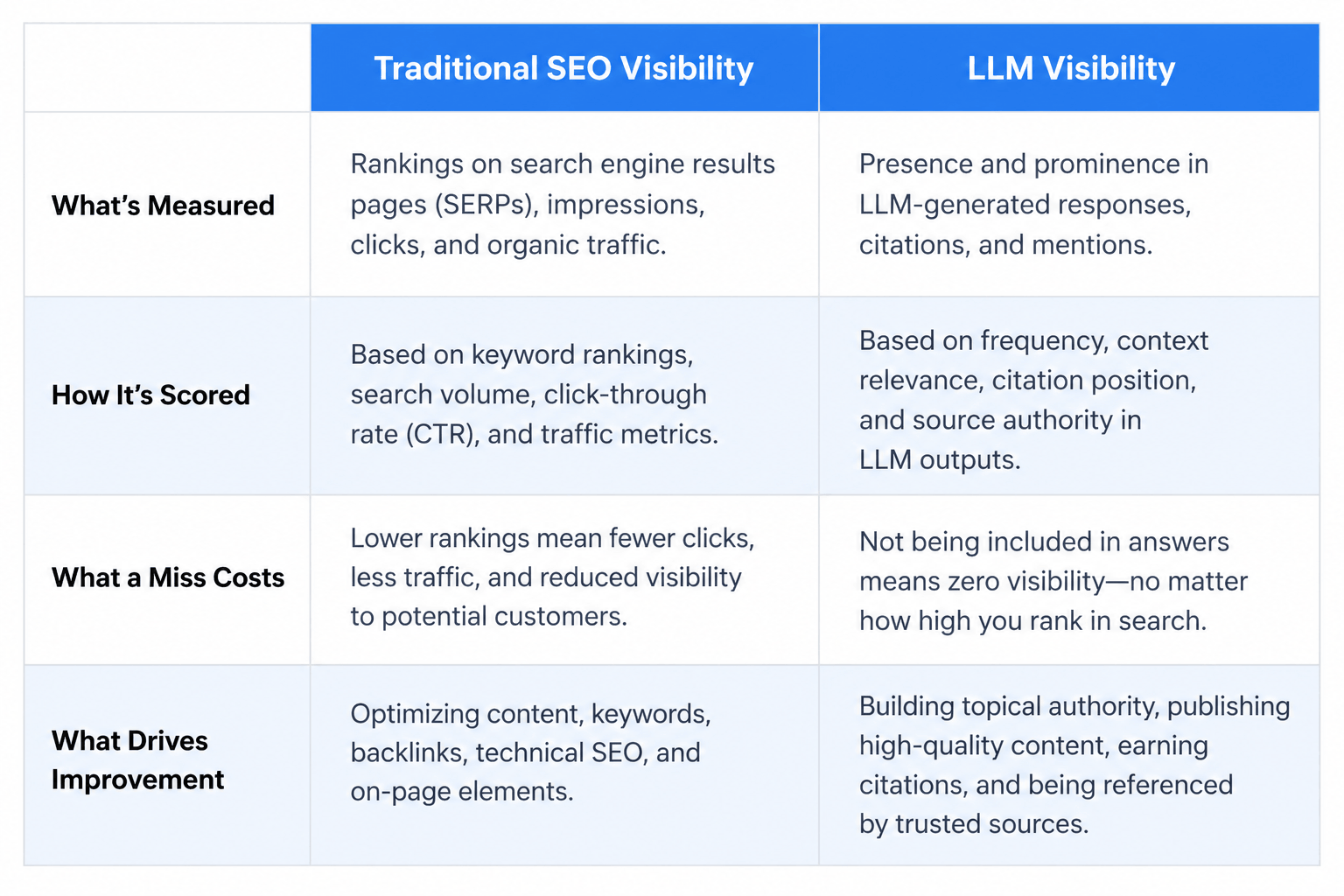

| Dimension | Traditional SEO Visibility | LLM Visibility |
| --- | --- | --- |
| What's measured | Ranking positions, click-through rates | Mention rate, mention position, sentiment score, AI share of voice |
| How it's scored | Position 1–100+ on a continuous scale | Binary: cited or not cited |
| What a miss costs | Reduced clicks; some traffic still arrives at positions 4–10 | Zero presence for that buyer on that query |
| What drives improvement | Backlinks, keyword optimization, page speed, Core Web Vitals | Structured schema, llms.txt, named authors, topical authority clusters, third-party platform presence |

Why Does LLM Visibility Matter for B2B SaaS Companies Right Now?

B2B buyers now use AI to research, shortlist, and evaluate software before they ever visit a vendor's website. Your LLM visibility score determines whether you make the consideration set.

According to Search Engine Land's AI Visibility Index findings, ChatGPT alone has at least 800 million weekly active users and processes at least 2.5 billion prompts a day. Buyers in your ICP are asking "What's the best [category] tool for [use case]?" in ChatGPT and Perplexity before they run a single Google search.

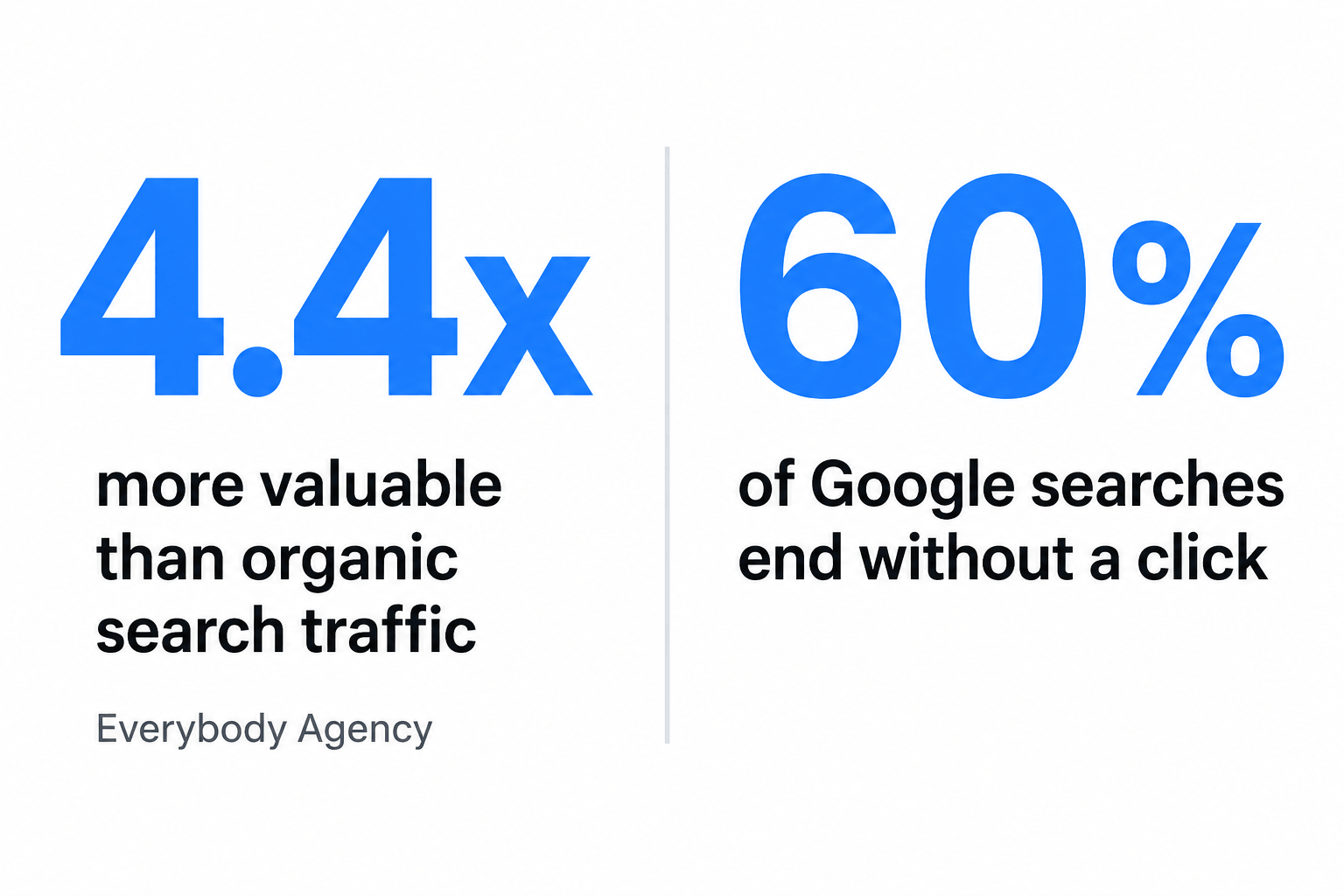

According to Everybody Agency's analysis of the AI answer layer, LLM referrals are worth 4.4x more than organic search traffic. AI search visitors arrive having already received a curated recommendation. Their intent is validated before they reach your site.

"LLM referrals are worth 4.4x more than organic search traffic, because users arrive pre-qualified by an AI recommendation rather than a ranked list." (Everybody Agency)

60% of Google searches already end without a click. AI-generated answers accelerate this by resolving queries before a user reaches a results page. SaaS marketing teams are seeing declining Google traffic alongside stable or rising branded searches, increasing direct traffic, and sales calls where prospects say "I found you through AI." These are signals of LLM-driven discovery bypassing traditional analytics entirely.

Signals your brand is being discovered through LLM but your analytics don't show it:

- Branded direct traffic is rising while organic traffic declines

- Sales discovery calls include unprompted mentions of "I saw you recommended by ChatGPT" or "Perplexity suggested you"

- Pipeline volume is stable despite fewer Google clicks

- Branded search volume is increasing without a corresponding paid campaign

Before optimizing, you need a baseline. Sona AI Visibility runs a free 17-check audit in under 30 seconds and tells B2B marketers whether AI engines can discover, read, and cite their site.

What Metrics Actually Measure LLM Visibility Performance?

LLM visibility performance is measured across four core metrics: AI Share of Voice, Mention Rate, Mention Position, and Sentiment Score. None appear in Google Search Console or traditional SEO dashboards.

Nightwatch.io's LLM visibility measurement framework provides the clearest breakdown:

AI Share of Voice: The percentage of relevant AI-generated answers that include your brand, compared with the same percentage for competitors. If your brand appears in 30 out of 100 relevant AI answers and your nearest competitor appears in 55, your AI Share of Voice is 30% against that competitor's 55%.

Mention Rate: How often your brand appears when a defined set of target prompts is run across ChatGPT, Perplexity, Claude, and Gemini. A mention rate of 40% means your brand appears in 4 out of every 10 relevant AI answers.

Mention Position: Whether your brand is cited first, in the middle, or as an afterthought. First mentions carry disproportionate weight. AI-generated answers are read sequentially, and buyers anchor on the first recommendation.

Sentiment Score: Whether the AI describes your brand positively, neutrally, or negatively. An AI that says "Brand X is a solid choice for mid-market teams" generates a different outcome than one that says "Brand X has mixed reviews for enterprise use cases."

LLMrefs and referral traffic: LLMrefs represents a distinct category of generative AI search analytics built to track LLM referral traffic, the small but high-converting slice of sessions arriving via AI-generated links. Capturing it requires deliberate instrumentation separate from standard organic analytics.
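
To make the four metrics concrete, here is a minimal Python sketch that computes them from a hand-logged set of AI answers. The `AnswerLog` record shape, the function names, and the +1/0/-1 sentiment labels are all hypothetical; they are not taken from Nightwatch.io or any tracking tool. Following the example above, share of voice is reported as each brand's mention rate over the same answer set. Some tools may define it differently, for instance as a share of total brand mentions.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class AnswerLog:
    """One logged AI answer for one prompt (hypothetical record shape)."""
    prompt: str
    engine: str
    brands_in_order: list[str]  # brands in the order the answer mentions them
    sentiment: dict[str, int]   # hand-labeled: +1 positive, 0 neutral, -1 negative


def mention_rate(logs: list[AnswerLog], brand: str) -> float:
    """Fraction of logged answers that mention the brand at all."""
    if not logs:
        return 0.0
    hits = sum(1 for log in logs if brand in log.brands_in_order)
    return hits / len(logs)


def average_position(logs: list[AnswerLog], brand: str) -> Optional[float]:
    """Mean 1-based mention position, over answers that mention the brand."""
    positions = [
        log.brands_in_order.index(brand) + 1
        for log in logs
        if brand in log.brands_in_order
    ]
    return sum(positions) / len(positions) if positions else None


def average_sentiment(logs: list[AnswerLog], brand: str) -> Optional[float]:
    """Mean sentiment label, over answers where the brand was labeled."""
    scores = [log.sentiment[brand] for log in logs if brand in log.sentiment]
    return sum(scores) / len(scores) if scores else None


def share_of_voice(logs: list[AnswerLog], brands: list[str]) -> dict[str, float]:
    """Each brand's mention rate over the same answer set, side by side."""
    return {brand: mention_rate(logs, brand) for brand in brands}
```

Keeping one record per prompt per engine means the same log can later be sliced by engine or by prompt without re-running anything.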

Nightwatch.io's research also surfaces a finding that reshapes how SaaS teams should think about their measurement stack: Google rankings and LLM citations don't correlate reliably. A brand ranking number one for a keyword may not appear in AI answers for the same query at all. For connecting LLM-referred traffic to downstream pipeline activity, Sona Attribution provides multi-touch revenue attribution that captures these non-standard referral paths.

Which Tools Are Best for Tracking LLM Visibility in 2026?

Dedicated LLM visibility tools are required to track brand mentions, sentiment, and share of voice across AI engines. Tools like Google Search Console have no visibility into what ChatGPT or Perplexity say about your brand.

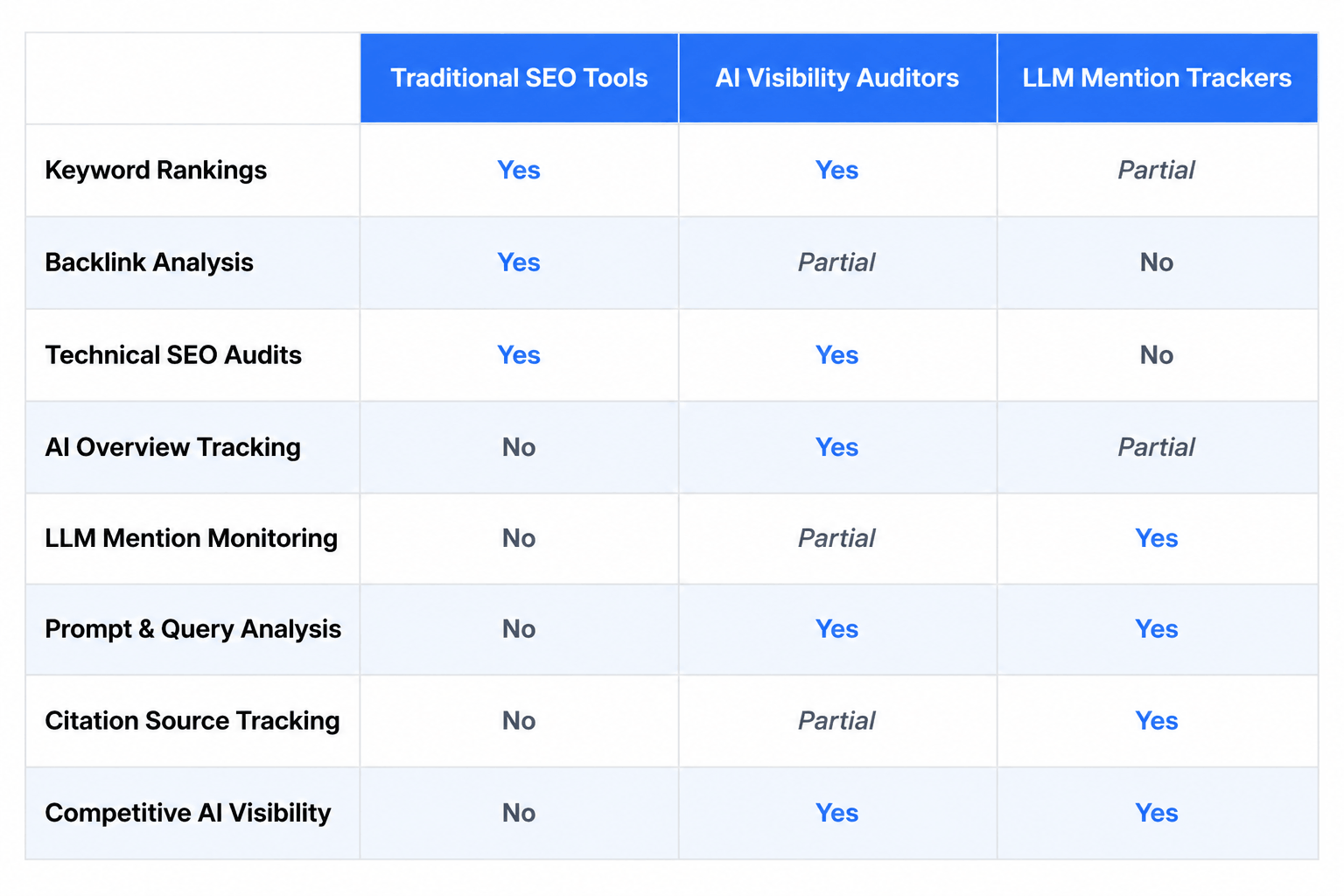

According to AIclicks.io's 2026 roundup of LLM visibility tools, the market has split into two complementary categories: technical AI readiness auditors (which check whether AI engines can crawl and cite your site) and LLM mention trackers (which monitor how often and how favorably your brand appears in AI-generated answers). SEO Hacker's comparative overview confirms this split and notes that most teams need both layers.

Sona AI Visibility covers the technical audit layer: 17 checks across crawlability, schema markup, content structure, and freshness. It's free, requires no account, and returns results in under 30 seconds. According to Sona's own data, 3 in 4 websites are partially or fully invisible to AI engines, and most fixes cost nothing to implement once identified.

Run your free audit at Sona AI Visibility to see your current score across the four categories AI engines use to decide whether to cite your site.

LLM Visibility Tool Categories: What to Look For

| Capability | Traditional SEO Tools | AI Visibility Auditors (e.g., Sona AI Visibility) | LLM Mention Trackers |
| --- | --- | --- | --- |
| Tracks Google ranking positions | Yes | No | No |
| Checks AI crawlability (GPTBot, llms.txt) | No | Yes | No |
| Measures AI Share of Voice | No | No | Yes |
| Sentiment scoring in AI answers | No | No | Yes |
| Schema markup validation for AI | Partial (generic schema checks) | Yes (AI-specific schema) | No |
| Competitor LLM benchmarking | Partial (ranking comparisons only) | No | Yes |
| Free tier available | Limited (trial only) | Yes, full 17-check audit | Limited (prompt caps) |
| Setup time | Hours to days | Under 30 seconds | 2–4 hours |
| Best for | Ranking optimization | Technical AI readiness | Ongoing brand monitoring |

AI visibility auditors and LLM mention trackers are complementary, not competing. Auditors fix the technical foundation that determines whether AI engines can cite you. Trackers monitor whether they actually do.

How Does LLM Visibility Affect Your SEO Strategy?

LLM visibility doesn't replace SEO. It adds a parallel measurement and optimization layer that traditional SEO strategies don't address, requiring B2B SaaS teams to optimize for AI citation signals alongside ranking signals.

The Query Fan Out concept is central here. A Reddit SaaS community discussion makes the case directly: LLMs internally expand prompts into multiple sub-queries, meaning topical authority across a cluster drives AI citation more than single-keyword optimization. A brand that publishes one highly ranked post on "CRM pricing" but nothing on integrations, onboarding, or enterprise security will lose LLM visibility to a brand with shallower individual posts but broader topical coverage.
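
One rough way to act on this is to write down the sub-topics you expect a buyer prompt to fan out into and compare them with what you have actually published. The sketch below does that in Python. The sub-queries an LLM really generates are not visible to you, so the `fan_out` mapping is a hand-written guess, and `coverage_gaps` is a hypothetical helper rather than any tool's API.

```python
def coverage_gaps(
    fan_out: dict[str, list[str]],
    published_topics: set[str],
) -> dict[str, list[str]]:
    """For each buyer prompt, list the guessed sub-topics with no published content."""
    published = {topic.strip().lower() for topic in published_topics}
    gaps: dict[str, list[str]] = {}
    for prompt, sub_topics in fan_out.items():
        missing = [t for t in sub_topics if t.strip().lower() not in published]
        if missing:
            gaps[prompt] = missing
    return gaps


# Assumed fan-out for one prompt; replace with your own category's sub-topics.
fan_out = {
    "best CRM for B2B SaaS": [
        "pricing",
        "integrations",
        "onboarding",
        "enterprise security",
    ],
}
gaps = coverage_gaps(fan_out, {"Pricing"})
```

In this example the brand has covered pricing only, so the three remaining sub-topics come back as gaps, matching the "CRM pricing" scenario described above.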

M+C Saatchi Performance's analysis of what actually drives LLM search visibility identifies the technical and content signals that matter most for AI citation. The practical guide to AI and LLM visibility from Backlinko covers the query fan-out testing methodology in detail, including how to probe which sub-queries your brand is and isn't appearing in.

LLM-specific optimization signals SEO tools don't track:

- llms.txt file presence and correct formatting

- GPTBot access confirmed (not blocked in robots.txt)

- dateModified schema on all content pages

- Named authors with bylines and author schema

- FAQPage schema on relevant content

- Third-party authority platform presence (G2, Capterra, industry analyst reports, trade publications)

- H1 to H2 to H3 content hierarchy that AI engines can parse
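
Several of the signals above are expressed as schema.org JSON-LD. As a minimal sketch, the Python below builds an `Article` object with a named author and `dateModified`, plus a `FAQPage` object. The types and properties are standard schema.org vocabulary; the function names and sample values are hypothetical, and how much weight any AI engine gives this markup is this article's claim, not something the code demonstrates.

```python
import json
from datetime import date


def article_jsonld(
    headline: str,
    author_name: str,
    published: date,
    modified: date,
    url: str,
) -> str:
    """Build schema.org Article JSON-LD with a named author and dateModified."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        "mainEntityOfPage": url,
    }
    return json.dumps(data, indent=2)


def faq_jsonld(qa_pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)
```

The returned string is meant to be placed inside a `<script type="application/ld+json">` tag in the page's HTML.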

Third-party platform presence is a major driver of LLM visibility that pure SEO strategy misses. LLMs draw from G2, Capterra, and industry publications when forming answers about software categories. A brand with 200 G2 reviews and coverage in three analyst reports will appear in AI answers for competitive queries even if its own website has technical gaps.

The recommended approach is a dual-track strategy: maintain traditional SEO for discovery volume, and build LLM visibility for conversion quality. Sona AI Visibility validates llms.txt, runs a live GPTBot probe, and checks schema markup, making it the fastest way to identify which of these signals are missing from your site.

How Do You Set Up LLM Visibility Tracking Step by Step?

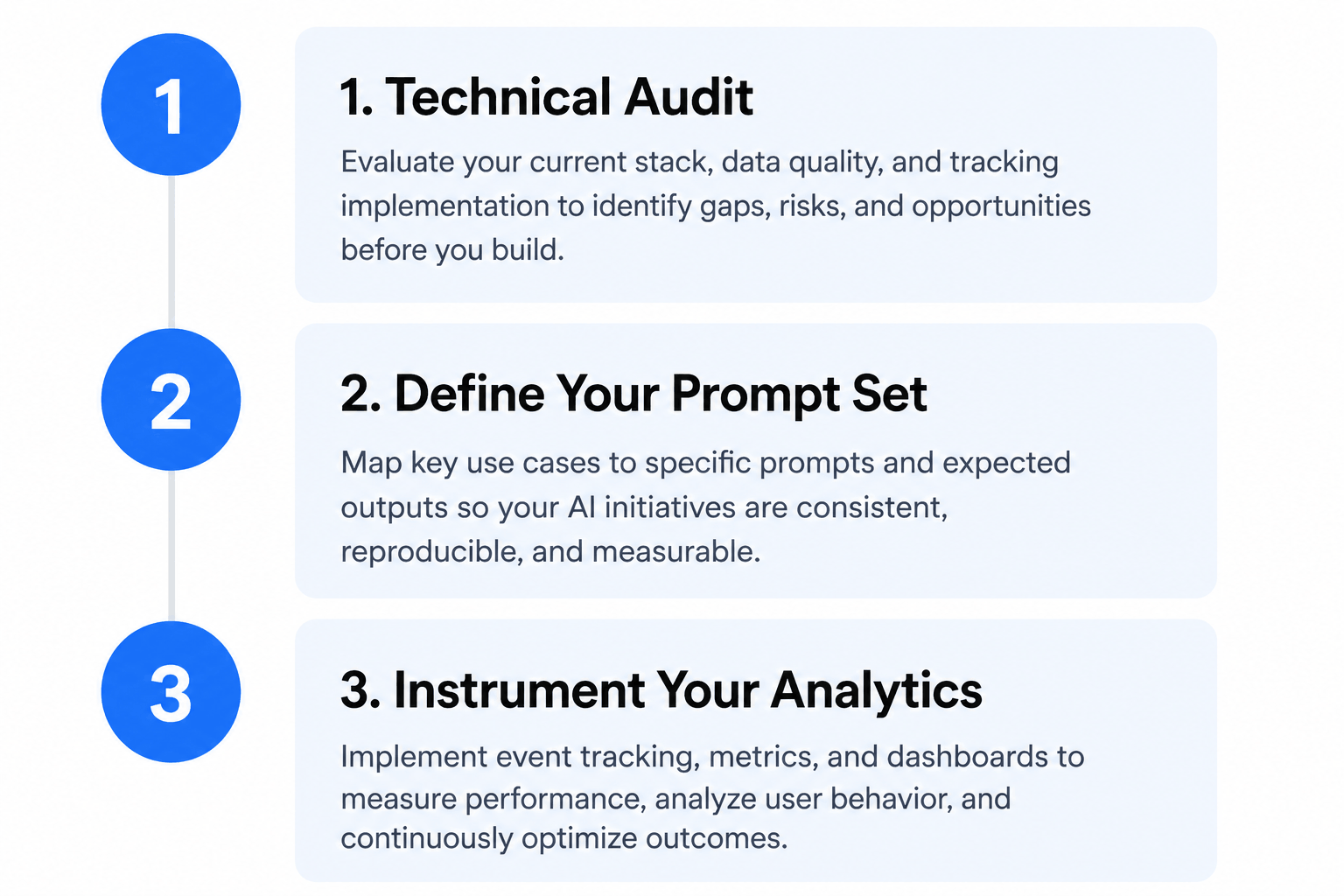

Setting up LLM visibility tracking requires three sequential steps: audit your site's technical AI readiness, establish a prompt set that mirrors how your buyers research your category, and instrument your analytics to capture LLM-referred traffic separately from organic search.

Step 1: Technical Audit

Confirm that AI engines can crawl, read, and cite your site. Check robots.txt for GPTBot blocks. Validate your llms.txt file. Confirm schema markup is present and correct for FAQPage, Article, and Organization. Verify content freshness signals including dateModified in schema and visible "Last updated" timestamps. Check that your content hierarchy follows H1 to H2 to H3 structure that AI engines can parse.
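
If you want to spot-check the first two items by hand, the Python sketch below uses the standard library's `urllib.robotparser` to test whether a robots.txt body blocks the `GPTBot` user agent. It also runs a very loose llms.txt check that assumes the convention from the llms.txt proposal that the file opens with a Markdown H1 title. The function names and the sample robots.txt are hypothetical, and this is not how Sona AI Visibility or any other auditor implements its checks.

```python
from urllib.robotparser import RobotFileParser


def crawler_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt body lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


def llms_txt_has_title(llms_txt: str) -> bool:
    """Loose check: the first non-empty line is a Markdown H1 ("# Name")."""
    for line in llms_txt.splitlines():
        if line.strip():
            return line.startswith("# ")
    return False


# Sample robots.txt that blocks GPTBot but allows everything else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

gptbot_ok = crawler_allowed(SAMPLE_ROBOTS, "GPTBot", "https://example.com/pricing")
```

With the sample above, `gptbot_ok` is `False`, which is the kind of block this step is meant to catch. To test a live site, fetch its `/robots.txt` and `/llms.txt` and pass the text in.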

Sona AI Visibility completes this step in under 30 seconds with a free 17-check audit covering crawlability, schema markup, content structure, and freshness. Most fixes cost nothing to implement once identified.

Step 2: Define Your Prompt Set

Identify 10–20 prompts that mirror how your ICP researches your category in ChatGPT or Perplexity. Structure them as buyers actually ask questions:

- "What's the best [category] tool for [specific use case]?"

- "Compare [your brand] vs. [top competitor]"

- "What do [your category] tools cost for a team of [size]?"

- "Which [category] platforms integrate with [common tool in your stack]?"

Run these prompts weekly, either manually or via a tracking tool, across ChatGPT, Perplexity, and Claude. For each answer, log whether your brand appears, where it appears, and what sentiment the AI expresses.
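
A prompt set like this can be generated from templates so it stays consistent week to week. The sketch below is one way to do that in Python: it rewrites the bracketed templates above with `{named}` placeholders and expands every combination of values. The placeholder names, the sample values, and `build_prompt_set` are all hypothetical.

```python
import itertools
import string


def build_prompt_set(
    templates: list[str],
    values: dict[str, list[str]],
) -> list[str]:
    """Expand each template with every combination of its placeholder values."""
    prompts: list[str] = []
    for template in templates:
        # Collect the {field} names used by this template, in order, de-duplicated.
        fields = list(dict.fromkeys(
            name for _, name, _, _ in string.Formatter().parse(template) if name
        ))
        for combo in itertools.product(*(values[field] for field in fields)):
            prompts.append(template.format(**dict(zip(fields, combo))))
    return prompts


TEMPLATES = [
    "What's the best {category} tool for {use_case}?",
    "Compare {brand} vs. {competitor}",
]

# Illustrative values only; substitute your own category and competitors.
VALUES = {
    "category": ["CRM"],
    "use_case": ["outbound sales", "customer success"],
    "brand": ["Acme"],
    "competitor": ["Globex"],
}

prompt_set = build_prompt_set(TEMPLATES, VALUES)
```

Because every combination is expanded, a handful of values per placeholder grows quickly; trim the lists to stay within the 10–20 prompts suggested above.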

Step 3: Instrument Your Analytics

Create a dedicated segment in your analytics platform for traffic arriving from AI referrers: perplexity.ai, chatgpt.com, claude.ai, and gemini.google.com. Track branded search volume as a separate metric. Brief your sales team to ask "How did you first hear about us?" on every discovery call and log AI mentions separately from other referral sources.
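
If your analytics platform lets you export raw referrer URLs, the segment can be approximated with a small classifier. The Python sketch below matches the four referrer hostnames named above, including their subdomains. The labels and the `classify_referrer` helper are hypothetical, and the sketch does not handle cases where an AI tool strips the referrer entirely.

```python
from __future__ import annotations

from typing import Optional
from urllib.parse import urlsplit

# Referrer domains taken from the list above, mapped to display labels.
AI_REFERRER_HOSTS = {
    "perplexity.ai": "Perplexity",
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI engine label for a referrer URL, or None if it is not one."""
    host = (urlsplit(referrer_url).hostname or "").lower()
    for domain, label in AI_REFERRER_HOSTS.items():
        # Match the domain itself or any subdomain such as www.perplexity.ai.
        if host == domain or host.endswith("." + domain):
            return label
    return None
```

Matching on the parsed hostname rather than a substring avoids counting lookalike domains, and ordinary Google search referrers are not mistaken for Gemini.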

Previsible.io's step-by-step LLM visibility tracking guide provides a detailed implementation framework for each of these steps, including how to structure prompt sets and configure analytics segments. The practical Backlinko guide covers the query fan-out testing methodology for identifying which sub-queries your brand is missing.

For teams that want to connect LLM-driven discovery to downstream pipeline activity, Sona Intent Signals tracks buyer research behavior and links early-stage AI-driven discovery to the accounts that eventually convert.

Start with Step 1 in under 30 seconds: run your free technical audit at Sona AI Visibility.

Frequently Asked Questions

What does LLM visibility mean for my brand's online presence?

LLM visibility determines whether your brand appears in the AI-generated answers that ChatGPT, Perplexity, Google AI Overviews, and Claude produce when users ask questions related to your category. Unlike a search ranking, LLM visibility is binary: you're either cited in the answer or you're not. Brands with strong LLM visibility are effectively recommended by AI to buyers who are actively researching purchase decisions, making it one of the highest-intent discovery channels available in 2026.

How do I track and improve my visibility in AI-powered search results?

Start by auditing your site's technical AI readiness: confirm that GPTBot can crawl your pages, that you have an llms.txt file, and that your content uses structured schema markup including FAQPage, Article, and Organization. Then define a prompt set that mirrors how your buyers research your category and run those prompts weekly across ChatGPT, Perplexity, and Claude to track mention rate and sentiment. Sona AI Visibility automates the technical audit step and surfaces the specific fixes most likely to improve AI citability, with results in under 30 seconds at no cost.

Can you explain how LLMs display and rank webpages?

LLMs don't rank webpages the way Google does. They generate text answers by drawing on training data and, in some cases, live web retrieval, selecting content they assess as authoritative, well-structured, and relevant to the user's prompt. Brands improve their chances of being cited by publishing content with clear structure (H1 to H2 to H3 hierarchy), named authors, schema markup, and fresh timestamps. Third-party platform presence on G2, Capterra, and industry publications is also a major driver because LLMs draw from these sources when forming answers about software categories.

What tools are available to measure visibility in large language model search outputs?

The LLM visibility tool landscape in 2026 falls into two categories: technical AI readiness auditors (which check whether AI engines can crawl and cite your site) and LLM mention trackers (which monitor how often and how favorably your brand appears in AI-generated answers). Sona AI Visibility covers the technical audit layer with a free 17-check scan across crawlability, schema markup, content structure, and freshness. For ongoing mention tracking, platforms like LLMrefs provide generative AI search analytics across multiple LLM platforms, tracking referral traffic from AI-generated links as a distinct signal from organic search.

How is LLM visibility changing the SEO landscape?

LLM visibility is creating a parallel optimization discipline alongside traditional SEO, with its own metrics, tools, and optimization tactics. Nightwatch.io's research found that Google rankings and LLM citations don't correlate reliably, meaning a brand can rank number one in Google and still be invisible in AI answers for the same query. B2B SaaS teams that treat LLM visibility as a separate measurement track are better positioned as AI-powered search captures a larger share of buyer research behavior, particularly at the top of the funnel where buyers are shortlisting options before visiting any vendor website.

What is a Query Fan Out and why does it matter for LLM visibility?

A Query Fan Out is the process by which an LLM internally expands a user's prompt into multiple related sub-queries before generating an answer. A prompt like "best CRM for B2B SaaS" might fan out into sub-queries about pricing, integrations, user reviews, and onboarding complexity. LLM visibility requires topical authority across a cluster of related topics, not just optimization for a single keyword. Content depth and breadth across a subject area matter more than exact-match keyword targeting.

What is an LLM visibility score and how is it calculated?

An LLM visibility score aggregates multiple signals including mention rate, mention position, sentiment score, and AI share of voice into a single performance indicator for a brand's presence in AI-generated answers. Some tools also incorporate technical readiness signals (crawlability, schema markup, content freshness) into the score. Sona AI Visibility produces a score and letter grade (A through F) across four technical categories, giving marketers a baseline from which to measure improvement over time.

Does LLM visibility drive real business results or is it just a vanity metric?

LLM visibility drives measurable business outcomes. AI search visitors arrive having already received a curated recommendation, meaning their intent is validated before they reach your site. Everybody Agency's research found that LLM referrals are worth 4.4x more per visit than organic search traffic. These results appear in branded search volume, direct traffic, and sales call attribution rather than in standard organic traffic reports, requiring separate instrumentation to capture accurately.

Last updated: April 2026
