What Is an LLM Visibility Tool — and Why Does Your Brand Need One in 2026?

An LLM visibility tool tracks how often, how accurately, and in what context your brand appears in AI-generated answers from LLM-powered engines like ChatGPT, Perplexity, Google AI Overviews, and Microsoft Copilot.

This is a different problem from traditional SEO. Google rankings tell you where you appear in a list. LLM visibility tells you whether AI engines are writing about your brand at all, and whether what they say is true.

According to Nick Lafferty's 2026 guide to LLM tracking tools, 67% of organizations are already deploying LLMs for customer-facing applications, making unmonitored AI representation a live business risk. Separately, 60% of Google searches now end without a click, which means AI-generated answers have become the first touchpoint for buyers researching your category.

B2B SaaS companies face three distinct failure modes without LLM visibility.

Invisibility: AI engines never cite your brand in relevant answers, even when you're the right solution. Competitors get the mention. You get nothing.

Misrepresentation: AI cites your brand but describes it inaccurately, attributing the wrong use cases, integrations, or positioning. Buyers form incorrect impressions before they ever visit your site.

Hallucination: AI fabricates specific facts about your brand: pricing tiers that don't exist, features you haven't built, integrations you don't support. B2B SaaS is especially vulnerable because product details are complex, frequently updated, and high-stakes for buyers making purchase decisions.

Without a visibility tool, you have no way to know which failure mode is affecting you, or how severely.

What Are the Best LLM Visibility Tools Available in 2026?

The leading LLM visibility tools in 2026 fall into four tiers: enterprise monitoring platforms, agency-focused reporting tools, technical AI readiness auditors, and keyword-based LLM trackers. Each solves a different slice of the visibility problem.

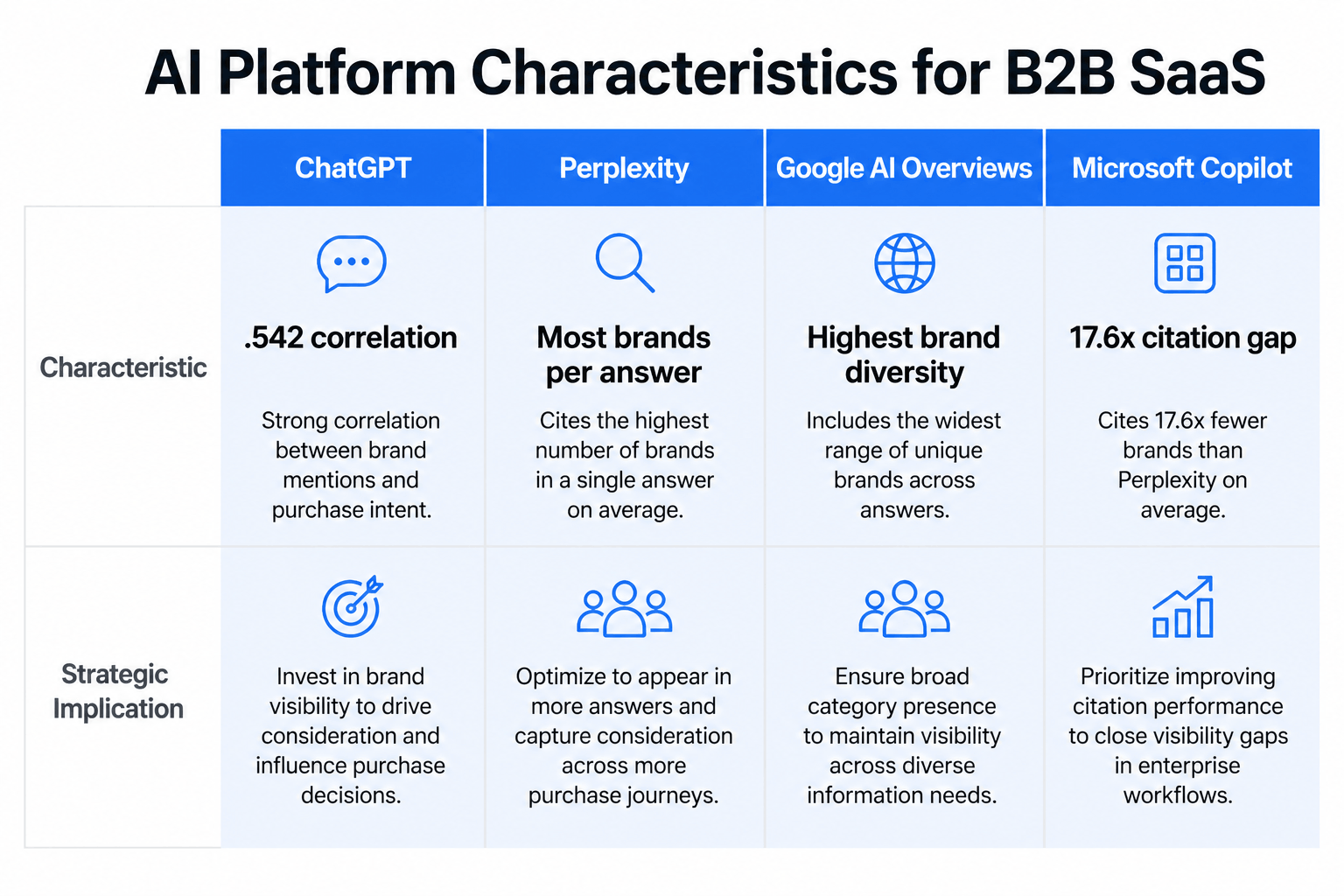

According to Nick Lafferty's analysis, Perplexity mentions the most brands per average answer, while Microsoft Copilot has the starkest citation inequality: the top 10% of brands receive 17.6x more citations than the rest. That concentration risk makes platform-specific tracking essential.
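The 17.6x figure is a citation concentration ratio. Lafferty's exact method isn't described here, so the sketch below assumes one plausible definition: mean citations per brand in the top 10% divided by mean citations per brand among the remaining brands. The `citations` mapping of brand to citation count is hypothetical input you would export from a monitoring tool.

```python
def citation_concentration(citations: dict[str, int], top_share: float = 0.10) -> float:
    """Mean citations of the top `top_share` of brands divided by the mean of the rest.

    Assumed definition for illustration; not necessarily the method behind the 17.6x figure.
    """
    counts = sorted(citations.values(), reverse=True)
    if len(counts) < 2:
        raise ValueError("need at least two brands to compare")
    # Size of the top group: at least one brand, and always leave one brand in "the rest".
    k = min(max(1, round(len(counts) * top_share)), len(counts) - 1)
    top, rest = counts[:k], counts[k:]
    rest_mean = sum(rest) / len(rest)
    if rest_mean == 0:
        return float("inf")  # every citation went to the top group
    return (sum(top) / len(top)) / rest_mean


# Hypothetical data: ten brands, one with 90 citations and nine with 10 each.
example = {"brand_a": 90, **{f"brand_{i}": 10 for i in range(9)}}
print(citation_concentration(example))  # 9.0
```

Under this definition, a ratio of 17.6 means the average top-decile brand is cited 17.6 times as often as the average brand outside it.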

Zapier's 2026 review of AI visibility tools provides detailed feature breakdowns of Profound and Peec AI, and the Traffic Think Tank GEO tool landscape overview maps the broader category for teams evaluating generative engine optimization tools.

Most comparison articles focus on the monitoring tier and skip the foundational question: can AI engines actually read your site? A brand monitoring tool tells you that you're not being cited. A technical readiness audit tells you why.

The enterprise tier (Profound, Semrush) covers the widest range of LLMs with the deepest feature sets. The agency tier (Peec AI) prioritizes client-ready reporting. The keyword tracking tier (LLMrefs) focuses on query-level brand discovery. The technical readiness tier, where Sona AI Visibility sits, addresses the upstream problem that determines whether any monitoring tool will show positive results.

How Do LLM Visibility Tools Detect Hallucinations and Track AI Accuracy?

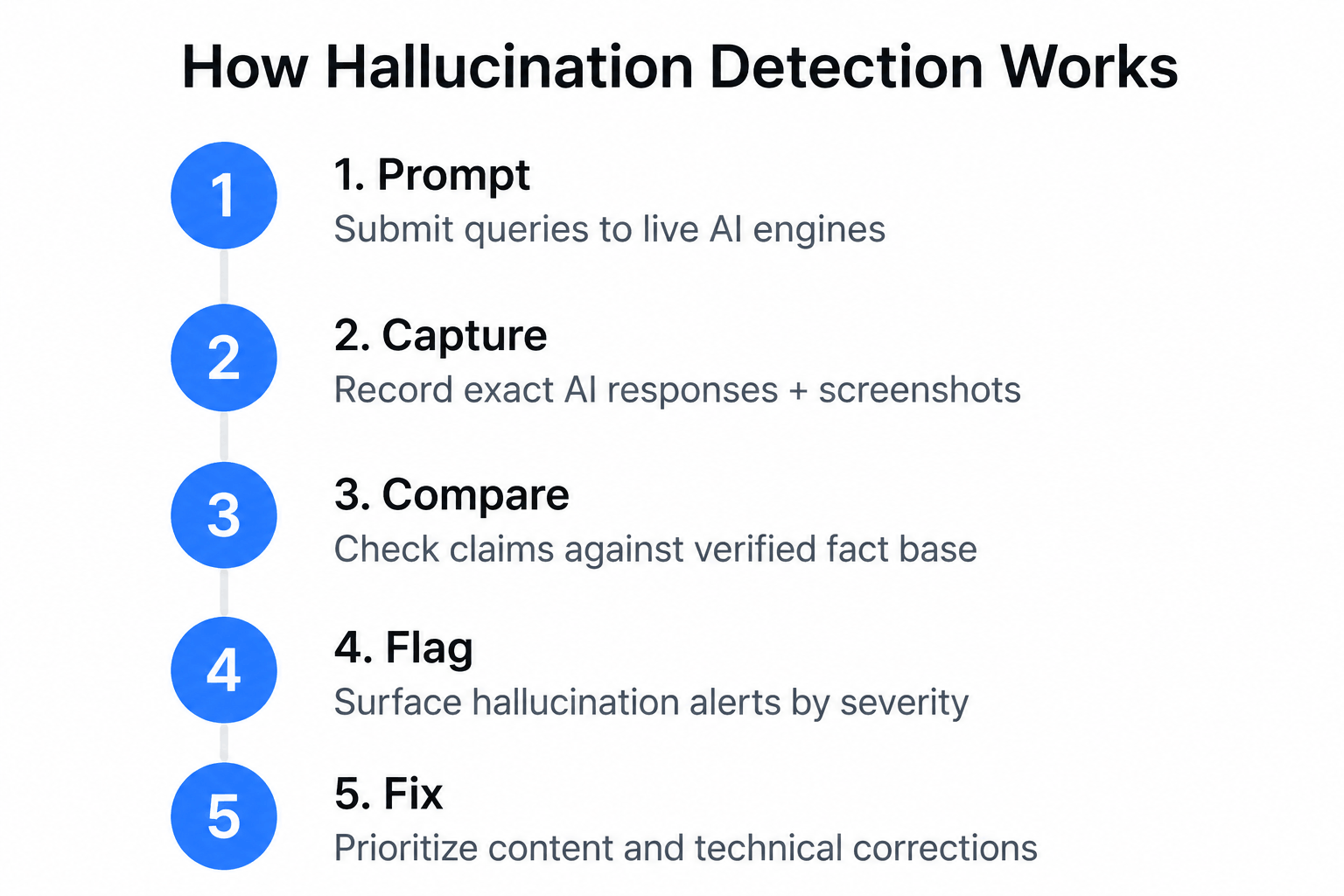

LLM visibility tools detect hallucinations by running controlled prompts against live AI engines, capturing the exact responses, and comparing AI-stated facts against your verified source of truth, including pricing, features, and integrations.

The workflow follows five steps:

- Prompt: The tool submits pre-defined queries about your brand to live AI engines (ChatGPT, Perplexity, Gemini, etc.)

- Capture: It records the exact AI-generated response, including screenshots in advanced platforms like Profound

- Compare: It checks AI-stated claims against your verified fact base (pricing pages, feature documentation, integration lists)

- Flag: It surfaces discrepancies as hallucination alerts, ranked by severity

- Fix: It guides your team toward the content or technical changes most likely to correct the AI's understanding
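The Compare and Flag steps can be sketched as a simple diff against a fact base. This is a minimal illustration, assuming the claims have already been extracted from the captured response text into field/value pairs (that extraction is where real tools do the hard work, and it is not shown). The field names and values in `FACT_BASE` are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical verified fact base: field -> (correct value, severity weight).
FACT_BASE: dict[str, tuple[str, int]] = {
    "starter_price_usd": ("49", 3),
    "salesforce_integration": ("yes", 2),
    "free_trial_days": ("14", 1),
}


@dataclass
class Discrepancy:
    field: str
    ai_value: str
    true_value: str
    severity: int


def flag_hallucinations(
    ai_claims: dict[str, str],
    fact_base: dict[str, tuple[str, int]] = FACT_BASE,
) -> list[Discrepancy]:
    """Compare claims extracted from one captured AI answer against the fact base."""
    flags = []
    for field, ai_value in ai_claims.items():
        if field not in fact_base:
            continue  # no verified value to compare against
        true_value, severity = fact_base[field]
        if ai_value.strip().lower() != true_value.lower():
            flags.append(Discrepancy(field, ai_value, true_value, severity))
    # Flag step: most severe discrepancies first.
    return sorted(flags, key=lambda d: d.severity, reverse=True)


claims = {"starter_price_usd": "29", "salesforce_integration": "Yes", "free_trial_days": "30"}
for d in flag_hallucinations(claims):
    print(d.field, d.ai_value, "->", d.true_value)
```

Exact string matching is deliberately crude here; a production check would need normalization for currencies, ranges, and paraphrased feature names.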

According to Zapier's 2026 testing of AI visibility tools, only one tool caught all four pricing hallucinations injected into the test scenario, demonstrating a real capability gap across the category. Most tools track whether you appear in AI answers. Far fewer verify what is actually said.

As Yotpo's 2026 analysis of LLM monitoring tools explains, leading platforms run queries on LLM chatbots continuously to track both performance and accuracy, not just citation frequency.

B2B SaaS companies face disproportionate hallucination risk because their products are complex and frequently updated. After a pricing change, a deprecated integration, or a renamed feature, the outdated details can persist in AI training data for months. There is also a technical precondition most hallucination discussions ignore: if AI engines can't crawl your site accurately, they fall back on older or lower-quality sources, which increases hallucination frequency. Sona AI Visibility addresses this upstream layer, checking whether GPTBot can access your pages, whether your schema markup is correctly structured, and whether your content freshness signals are visible to AI crawlers.

Which LLM Visibility Metrics and KPIs Actually Matter?

The metrics that matter most are share of voice across AI platforms, citation accuracy rate, prompt coverage breadth, and, for revenue-focused teams, the correlation between AI mentions and organic traffic or pipeline.

The core KPI set:

- Share of Voice (SoV): What percentage of relevant AI answers mention your brand, relative to competitors

- Citation Accuracy Rate: How often AI-stated facts about your brand are correct

- Prompt Coverage: How many relevant queries, by topic, use case, or buyer stage, trigger a brand mention

- Hallucination Rate: How frequently AI states incorrect facts about your product, pricing, or integrations

- AI-to-Pipeline Attribution: Whether AI citations correlate with measurable traffic or conversion events
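The first four KPIs can be computed from a log of captured answers. The sketch below is a minimal illustration, assuming a hypothetical `Answer` record per captured response; it defines share of voice as your mentions divided by all tracked-brand mentions, which is one of several reasonable definitions. AI-to-pipeline attribution needs traffic data and is left out.

```python
from dataclasses import dataclass


@dataclass
class Answer:
    prompt: str
    platform: str
    brands: set[str]        # tracked brands mentioned in this answer
    facts_checked: int = 0  # facts about your brand compared against the fact base
    facts_wrong: int = 0    # how many of those facts were incorrect


def visibility_kpis(answers: list[Answer], brand: str) -> dict[str, float]:
    """Compute SoV, prompt coverage, citation accuracy and hallucination rate for `brand`."""
    ours = [a for a in answers if brand in a.brands]
    total_mentions = sum(len(a.brands) for a in answers)
    all_prompts = {a.prompt for a in answers}
    covered_prompts = {a.prompt for a in ours}
    checked = sum(a.facts_checked for a in ours)
    wrong = sum(a.facts_wrong for a in ours)
    return {
        # Your mentions as a share of all tracked-brand mentions.
        "share_of_voice": len(ours) / total_mentions if total_mentions else 0.0,
        # Share of distinct prompts where at least one answer mentioned you.
        "prompt_coverage": len(covered_prompts) / len(all_prompts) if all_prompts else 0.0,
        # Share of checked facts that were correct (NaN if nothing was checked).
        "citation_accuracy": (checked - wrong) / checked if checked else float("nan"),
        # Share of answers mentioning you that contained at least one wrong fact.
        "hallucination_rate": sum(1 for a in ours if a.facts_wrong) / len(ours) if ours else 0.0,
    }


# Hypothetical captured answers.
answers = [
    Answer("best crm for startups", "chatgpt", {"Acme", "RivalCo"}, facts_checked=2, facts_wrong=1),
    Answer("best crm for startups", "perplexity", {"RivalCo", "OtherInc"}),
    Answer("crm with slack integration", "chatgpt", {"Acme"}, facts_checked=3),
    Answer("cheapest crm", "perplexity", {"RivalCo"}),
]
print(visibility_kpis(answers, "Acme"))
```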

Platform selection matters because each LLM behaves differently. According to Nick Lafferty's research, ChatGPT shows the highest correlation between brand popularity and citation frequency (0.542), meaning established brands have a structural advantage on the platform most B2B buyers use. Perplexity cites the most brands per answer, creating more opportunity for less-established brands. Google AI Overviews shows the highest brand diversity across responses.

Yotpo's coverage of LLM monitoring tools introduces the Unified AI Visibility Score concept, which simplifies multi-platform data into a single KPI for executive-level reporting. Click Insights provides a prompt visibility overview and share-of-voice tracking methodology that maps brand mentions to specific query types, helping teams identify which content gaps are driving citation misses.
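Yotpo's exact formula for a Unified AI Visibility Score isn't given here. As a hypothetical illustration only, one way to collapse multi-platform data into a single number is a weighted mean of per-platform mention rates; the platform keys and weights below are assumptions you would set to reflect where your buyers actually search.

```python
def unified_visibility_score(
    mention_rates: dict[str, float],  # per-platform share of answers mentioning you, 0..1
    weights: dict[str, float],        # hypothetical importance weight per platform
) -> float:
    """Weighted mean of per-platform mention rates, scaled to 0..100."""
    # Only platforms with both data and a positive weight contribute.
    used = {p: w for p, w in weights.items() if p in mention_rates and w > 0}
    total_weight = sum(used.values())
    if total_weight == 0:
        raise ValueError("no weighted platform has data")
    return 100 * sum(mention_rates[p] * w for p, w in used.items()) / total_weight


rates = {"chatgpt": 0.40, "perplexity": 0.60, "ai_overviews": 0.20}
weights = {"chatgpt": 0.5, "perplexity": 0.3, "ai_overviews": 0.2}
print(round(unified_visibility_score(rates, weights), 1))  # 42.0
```

Renormalizing over the platforms that have data keeps the score comparable when one platform's tracking is temporarily missing, at the cost of hiding that gap; report coverage alongside the score.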

Sona AI Visibility scores your site across four categories: Crawlability (52 points), Schema Markup (30 points), Content Structure (20 points), and Freshness (25 points). These scores represent the foundational layer that determines whether brand monitoring tools will show improvement after content changes.

How Can B2B SaaS Teams Use LLM Visibility Tools to Improve AI Search Rankings?

B2B SaaS teams improve their AI search presence by fixing the technical barriers that prevent AI engines from crawling and citing their content, then layering brand monitoring to track whether those fixes translate into more citations.

Phase 1: Technical Readiness

According to Sona AI Visibility platform data, 3 in 4 websites are partially or fully invisible to AI engines, and most fixes cost nothing to implement once identified. The four highest-impact fixes:

- Add or correct your llms.txt file to guide AI reading behavior

- Implement FAQPage, Article, Organization, and Breadcrumb schema markup

- Enforce H1 to H2 to H3 heading hierarchy with named authors on key pages

- Add dateModified timestamps and ensure GPTBot is not blocked in robots.txt
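The GPTBot check can be run locally with Python's standard-library `urllib.robotparser`. This is a minimal sketch that parses a robots.txt string you have already downloaded; `crawler_allowed` is a hypothetical helper name. Note that `robotparser` applies rules in first-match order, which can disagree in edge cases with crawlers that implement the longest-match rules of RFC 9309.

```python
from urllib.robotparser import RobotFileParser


def crawler_allowed(robots_txt: str, url: str, user_agent: str = "GPTBot") -> bool:
    """Return True if `user_agent` may fetch `url` under the given robots.txt rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


blocks_gptbot = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(crawler_allowed(blocks_gptbot, "https://example.com/pricing"))               # False
print(crawler_allowed(blocks_gptbot, "https://example.com/pricing", "Googlebot"))  # True
```

This configuration is the case the fix targets: the site is fully open to Google, so traditional SEO looks healthy, while GPTBot is shut out of every page.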

Phase 2: Brand Monitoring

Once technical barriers are removed, monitoring tools can track whether your content is being cited, and by which platforms. The four actions that drive Phase 2 results:

- Set up prompt coverage tracking across ChatGPT, Perplexity, and Google AI Overviews

- Run competitor benchmarking to identify citation gaps by topic cluster

- Monitor hallucination rate weekly for pricing pages and feature documentation

- Connect AI mention data to traffic and pipeline using GA4 integration
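Competitor benchmarking by topic cluster comes down to comparing mention rates per cluster. The sketch below assumes each captured answer has already been tagged with a topic cluster (a hypothetical input format of `(topic, brands_mentioned)` pairs) and reports your mention rate minus one competitor's, most negative gap first.

```python
from collections import defaultdict


def citation_gaps(
    answers: list[tuple[str, set[str]]],  # (topic cluster, brands mentioned in the answer)
    brand: str,
    competitor: str,
) -> dict[str, float]:
    """Per topic cluster: your mention rate minus the competitor's. Most negative first."""
    totals: dict[str, int] = defaultdict(int)
    ours: dict[str, int] = defaultdict(int)
    theirs: dict[str, int] = defaultdict(int)
    for topic, brands in answers:
        totals[topic] += 1
        ours[topic] += int(brand in brands)
        theirs[topic] += int(competitor in brands)
    gaps = {t: (ours[t] - theirs[t]) / totals[t] for t in totals}
    return dict(sorted(gaps.items(), key=lambda kv: kv[1]))


# Hypothetical tagged answers.
tagged = [
    ("integrations", {"RivalCo"}),
    ("integrations", {"RivalCo", "Acme"}),
    ("pricing", {"Acme"}),
    ("pricing", {"Acme", "RivalCo"}),
]
print(citation_gaps(tagged, "Acme", "RivalCo"))
```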

According to Zapier's review, Profound's GA4 integration enables teams to connect AI citation frequency directly to revenue attribution, while Semrush's AI Visibility Toolkit shows brand appearance across ChatGPT, Google AI Mode, Gemini, and Perplexity with competitor rankings in a single dashboard. Traffic Think Tank's GEO tool landscape analysis frames this two-phase approach as the standard generative engine optimization workflow: fix crawlability first, then optimize for citation.

Sona AI Visibility runs 17 checks across the four categories above, completes in under 30 seconds, and surfaces exactly which fixes to prioritize.

Are There Free or Affordable LLM Visibility Tools for Small Teams and Agencies?

Yes. Several LLM visibility tools offer free tiers or sub-$50/month entry points, making AI search tracking accessible for startups, small agencies, and teams not ready for enterprise pricing.

Sona AI Visibility completes a full 17-check audit across up to 15 pages in under 30 seconds, with no account required and no cost within the free tier limit of 5 audits per day. LLMrefs offers a freemium keyword-based tracking tier for teams monitoring brand mentions across multiple LLMs without a per-seat subscription. According to Zapier's tool review, Peec AI offers an affordable entry point with Pitch Workspaces for sharing AI visibility reports with agency clients. ZipTie provides simple self-serve brand tracking with no complex setup or sales process.

Practitioners in the r/SaaS community consistently flag the same trade-off: affordable tools show whether you're being cited, but real-time hallucination detection, GA4 revenue attribution, multi-seat collaboration, and API access are reserved for higher-tier plans.

The practical stack for small teams: run Sona AI Visibility first to identify and fix technical barriers, then layer on LLMrefs or Peec AI for ongoing brand monitoring. Fixing technical issues before paying for monitoring means your monitoring data reflects actual brand performance, not crawl failures.

How Do LLM Visibility Tools Integrate With Existing SEO and Content Workflows?

The best LLM visibility tools slot into existing workflows through native integrations with GA4, Zapier, and CRM platforms, adding the AI-specific signal layer that traditional tools like Ahrefs and Semrush weren't originally built to capture.

A site can rank number one on Google and still be completely invisible to AI answer engines. Google rewards backlinks, page authority, and keyword relevance. AI engines reward structured data quality, content freshness, named authorship, and crawlability by AI-specific bots like GPTBot. Traditional SEO tools don't validate llms.txt files, run live GPTBot probes, check FAQPage schema for AI citation readiness, or surface dateModified freshness signals.
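A basic version of the schema and freshness checks can be written with the standard library alone. This is a minimal sketch, not a validator: it only reports whether JSON-LD blocks on a page declare `FAQPage`, `Article`, or `Organization` types and whether any item sets `dateModified`. It does not check schema.org property requirements, and it cannot tell you how any particular AI engine weighs these signals. `JsonLdExtractor` and `schema_signals` are hypothetical names.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the text of every <script type="application/ld+json"> element."""

    def __init__(self) -> None:
        super().__init__()
        self._inside = False
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._inside = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._inside = False

    def handle_data(self, data):
        if self._inside:
            self.blocks[-1] += data


def schema_signals(html: str) -> dict[str, bool]:
    """Presence checks only: schema.org types found and whether any item sets dateModified."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    types: set[str] = set()
    has_date_modified = False
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed JSON-LD; a real audit would report this
        if isinstance(data, dict) and "@graph" in data:
            data = data["@graph"]
        for item in data if isinstance(data, list) else [data]:
            if not isinstance(item, dict):
                continue
            t = item.get("@type")
            if isinstance(t, str):
                types.add(t)
            elif isinstance(t, list):
                types.update(x for x in t if isinstance(x, str))
            has_date_modified = has_date_modified or "dateModified" in item
    return {
        "faqpage": "FAQPage" in types,
        "article": "Article" in types,
        "organization": "Organization" in types,
        "date_modified": has_date_modified,
    }


page = (
    '<html><head><script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "FAQPage", '
    '"dateModified": "2026-04-01", "mainEntity": []}'
    "</script></head><body><h1>Pricing</h1></body></html>"
)
print(schema_signals(page))
```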

According to Nick Lafferty's analysis, Profound's GA4 integration connects AI mention data directly to revenue attribution, enabling teams to correlate AI citation frequency with pipeline impact. Yotpo's review highlights how leading platforms provide unified dashboards and actionable content structure recommendations, reducing the manual work of translating audit findings into team tasks.

The practical workflow for integrating LLM visibility into an existing content and development process:

- Audit: Run Sona AI Visibility to get a baseline score across Crawlability, Schema, Content Structure, and Freshness

- Export: Pull findings into your project management tool (Jira, Linear, Notion) as prioritized tickets

- Assign: Route technical fixes (GPTBot unblocking, schema implementation) to dev; content fixes (heading hierarchy, named authors, dateModified) to the content team

- Re-audit: Re-run the audit after implementation to verify fixes registered correctly

- Monitor: Layer on a brand monitoring tool (Peec AI, Profound, or Semrush) to track citation changes over time
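The Export and Assign steps can be automated with a small routing table. The sketch below is hypothetical throughout: the finding format, the `points_lost` impact measure, the priority threshold, and the ticket payload are assumptions, and the payloads are generic dictionaries rather than any specific Jira, Linear, or Notion API.

```python
from dataclasses import dataclass

# Hypothetical routing table mirroring the Assign step: audit category -> owning team.
OWNER_BY_CATEGORY = {
    "crawlability": "dev",
    "schema": "dev",
    "content_structure": "content",
    "freshness": "content",
}


@dataclass
class Finding:
    check: str
    category: str
    points_lost: int  # hypothetical impact measure from the audit report


def to_tickets(findings: list[Finding], high_priority_at: int = 10) -> list[dict[str, str]]:
    """Turn audit findings into generic ticket payloads, highest impact first."""
    ordered = sorted(findings, key=lambda f: f.points_lost, reverse=True)
    return [
        {
            "title": f"[AI visibility] {f.check}",
            "team": OWNER_BY_CATEGORY.get(f.category, "triage"),
            "priority": "high" if f.points_lost >= high_priority_at else "normal",
        }
        for f in ordered
    ]


findings = [
    Finding("Named author missing on pricing page", "content_structure", 5),
    Finding("GPTBot blocked in robots.txt", "crawlability", 20),
]
for ticket in to_tickets(findings):
    print(ticket)
```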

ZipTie's URL-level filtering (not just domain-level) adds granularity for content teams that need to track which specific pages are being cited, rather than aggregate domain performance.
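The difference between domain-level and URL-level tracking is easy to see on a list of cited URLs. This minimal sketch assumes you have such a list from a monitoring export; it strips query strings and fragments so that tracking parameters don't split one page's count across several entries.

```python
from collections import Counter
from urllib.parse import urlsplit


def citation_counts(cited_urls: list[str]) -> tuple[Counter, Counter]:
    """Count AI citations per domain and per page URL."""
    by_domain = Counter(urlsplit(u).hostname for u in cited_urls)
    # Drop query strings and fragments so tracking parameters don't split one page's count.
    by_url = Counter(urlsplit(u)._replace(query="", fragment="").geturl() for u in cited_urls)
    return by_domain, by_url


# Hypothetical cited URLs.
cited = [
    "https://example.com/pricing?utm_source=chatgpt",
    "https://example.com/pricing",
    "https://example.com/blog/llms-txt",
    "https://rival.io/features",
]
by_domain, by_url = citation_counts(cited)
print(by_domain.most_common())
print(by_url.most_common(2))
```

At the domain level, example.com simply shows three citations; at the URL level, it becomes clear that the pricing page earns most of them.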

Frequently Asked Questions

What is an LLM visibility tool?

An LLM visibility tool tracks whether and how your brand appears in AI-generated answers from LLM-powered engines like ChatGPT, Perplexity, Google AI Overviews, and Microsoft Copilot. It measures citation frequency, share of voice, and mention accuracy, and, in advanced platforms, detects hallucinations where AI states incorrect facts about your brand. The category is distinct from traditional SEO rank tracking, which measures position in a list rather than presence and accuracy in a generated answer.

What tools can I use to track my brand's visibility across multiple large language models?

The leading multi-LLM tracking tools in 2026 include Profound (8+ LLMs, enterprise-grade with real-time response capture), Semrush AI Visibility Toolkit (ChatGPT, Gemini, Perplexity, and Google AI Mode, with competitor benchmarking), Peec AI (agency-focused reporting across ChatGPT, Perplexity, and Gemini), and Click Insights (share-of-voice tracking with a prompt visibility overview). For technical AI readiness, the foundational layer before brand monitoring, Sona AI Visibility offers a free 17-check audit that identifies why AI engines are unable to crawl or cite your site.

How do LLM visibility tools detect hallucinations?

Hallucination detection works by running controlled prompts against live AI engines, capturing the exact responses, and comparing AI-stated claims against your verified facts. Most tools track whether you appear, not what is said. Tools with active hallucination detection, like Profound's real-time response capture with screenshot verification, are valuable for B2B SaaS companies where pricing tiers, feature lists, and integration claims are frequently misrepresented in AI-generated answers.

Is there a free LLM visibility tool?

Yes. Sona AI Visibility is a free AI visibility audit tool that runs 17 checks across crawlability, schema markup, content structure, and freshness, completing in under 30 seconds with no account required (up to 5 audits per day). LLMrefs also offers a freemium keyword-based tracking tier for teams monitoring brand mentions across multiple LLMs. Most paid tools offer free trials before requiring a subscription.

What features should I look for in an LLM visibility tool?

Evaluate tools across six dimensions: (1) number of LLMs covered, (2) hallucination detection capability and verification method, (3) share-of-voice and competitor benchmarking, (4) technical readiness checks including crawlability, schema validation, and llms.txt, (5) integration with GA4 or your CRM for revenue attribution, and (6) prompt coverage breadth across buyer journey stages. For most B2B SaaS teams, starting with a technical audit before investing in brand monitoring delivers the fastest ROI because it removes the crawl barriers that suppress citation rates.

How do I choose the best LLM visibility tool for my team size and budget?

Match the tool to your primary use case. If you need to fix why AI engines ignore your site, start with a free technical audit using Sona AI Visibility. If you need ongoing brand monitoring across multiple LLMs, Peec AI or ZipTie offer accessible entry points at roughly $29 to $49/month. If you need enterprise-grade hallucination detection, revenue attribution, and multi-seat collaboration, Profound and Semrush are the category leaders. Most teams see the best results by running a free technical audit first, then layering on a monitoring tool once crawlability and schema issues are resolved.

What metrics should I track to measure LLM visibility success?

The core KPIs are: Share of Voice (what percentage of relevant AI answers mention your brand), Citation Accuracy Rate (are AI-stated facts correct), Prompt Coverage (how many relevant queries trigger a mention), Hallucination Rate (how often AI states incorrect facts), and AI-to-pipeline attribution (do AI citations correlate with traffic or conversions). ChatGPT shows the highest correlation between brand popularity and citation frequency (0.542), so established brands hold a structural advantage there; because it is also the platform most B2B buyers use, it is the priority platform for most B2B SaaS teams starting their LLM visibility programs.

How do LLM visibility tools differ from traditional SEO tools?

Traditional SEO tools optimize for Google's ranking algorithm, tracking keyword positions, backlinks, and page authority. LLM visibility tools target a different signal set: structured data that AI engines parse (FAQPage, Article, Organization schema), llms.txt files that guide AI reading behavior, content freshness signals via dateModified, named authorship, and GPTBot crawl access. A site can rank number one on Google and still be completely invisible to AI answer engines. The two tool categories are complementary, not interchangeable.

Last updated: April 2026