What actually changes when AI engines answer a query instead of ranking pages?

AI search engines synthesize a single answer from multiple sources and surface citations inline. They do not return a ranked list of ten blue links.

According to Indexly.ai, 60% of Google searches now end without a click. The user reads the synthesized answer and moves on. Your page either gets cited inside that answer or it does not exist.

Two contrasts and one implication define the shift:

- Traditional SERPs: Ranked list, user clicks, traffic flows to the winner at position one.

- AI SERPs: Synthesized paragraph, citations appear inline, user never leaves the interface.

- Implication for competitor analysis: The question shifts from "who outranks me?" to "who gets cited instead of me?" Running an AI visibility audit surfaces that gap faster than any rank tracker.

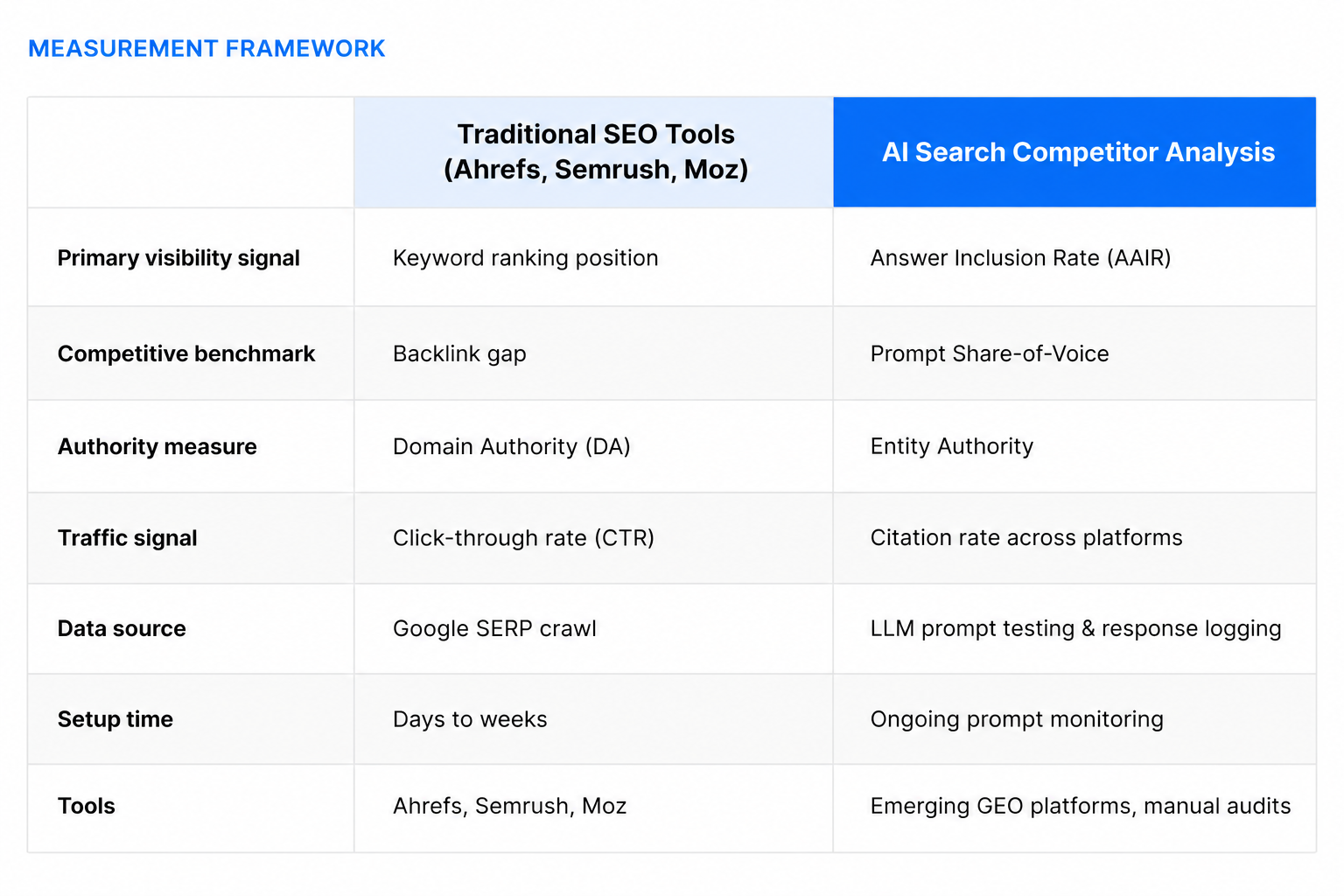

Why do Ahrefs, Semrush, and Moz structurally miss LLM visibility?

These tools were built to crawl SERPs and index backlink graphs. Neither data source tells you how an LLM selects, weights, or cites content inside a generated answer.

You cannot query Ahrefs with "what does ChatGPT say when someone asks about revenue attribution tools?" As Indexly.ai reports, traditional SEO tools excel at SERP positions and backlink profiles but cannot measure citation frequency or sentiment in AI-generated responses. They are not bad tools. They are the wrong tools for this measurement problem.

For the full strategic overview, see our guide on the best competitor analysis tools for AI search and LLMs.

What is Answer Inclusion Rate, and how does it replace keyword rankings?

Answer Inclusion Rate, abbreviated AAIR (AI Answer Inclusion Rate), measures how frequently your brand or content appears inside AI-generated answers for a defined set of prompts. It is the closest equivalent to ranking position in an LLM environment.

Geneo.app names AAIR and prompt-specific retrieval rate as the primary 2025 KPIs for AI visibility competitive benchmarking, replacing traditional ranking and CTR metrics. There is no position one through ten in a synthesized answer. You are either in or out.

How to calculate your baseline manually:

- Build a prompt set of 20 to 50 category queries covering your core use cases.

- Run each prompt across ChatGPT, Perplexity, and Claude. Log every result.

- Record which prompts include your brand in the answer.

- Divide brand-included prompts by total prompts tested and multiply by 100.

- Repeat for each competitor. The gap between their AAIR and yours is your competitive deficit.

- Track each platform separately. A brand cited heavily in Perplexity may be absent in ChatGPT.
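
The arithmetic in the steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the log format, the `inclusion_rate` helpers, and the brand names `OurBrand` and `RivalCo` are all hypothetical placeholders for whatever your own tracker records.

```python
from collections import defaultdict

# Hypothetical log: one row per (platform, prompt) run, with the set of
# brands found in that answer. All names and prompts are placeholders.
RESULTS = [
    {"platform": "ChatGPT", "prompt": "what is revenue attribution?",
     "brands": {"OurBrand", "RivalCo"}},
    {"platform": "ChatGPT", "prompt": "best revenue attribution tools for B2B",
     "brands": {"RivalCo"}},
    {"platform": "Perplexity", "prompt": "what is revenue attribution?",
     "brands": {"OurBrand", "RivalCo"}},
    {"platform": "Perplexity", "prompt": "best revenue attribution tools for B2B",
     "brands": {"OurBrand", "RivalCo"}},
]


def inclusion_rate(rows, brand):
    """Brand-included prompts divided by total prompts tested, times 100."""
    if not rows:
        return 0.0
    included = sum(1 for row in rows if brand in row["brands"])
    return 100.0 * included / len(rows)


def inclusion_rate_by_platform(rows, brand):
    """Same calculation, computed separately for each platform."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row["platform"]].append(row)
    return {platform: inclusion_rate(group, brand)
            for platform, group in grouped.items()}


ours = inclusion_rate(RESULTS, "OurBrand")
rival = inclusion_rate(RESULTS, "RivalCo")
deficit = rival - ours  # the competitive gap in percentage points
per_platform = inclusion_rate_by_platform(RESULTS, "OurBrand")
```

With this sample log, `OurBrand` is included in three of four answers overall but only one of two on ChatGPT, which is the kind of platform-level divergence the last step is meant to catch.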

Audit your current AI answer inclusion before benchmarking competitors. You need your own baseline first.

How do you measure Share-of-Voice in AI search, and why does it matter more than backlink gaps?

Prompt Share-of-Voice measures what percentage of AI-generated answers in your category mention your brand versus competitors. It replaces backlink gap analysis as the primary competitive benchmark.

A backlink gap tells you who has more links. It does not tell you who gets recommended when a buyer types "what's the best revenue attribution tool for B2B?" into Perplexity. Indexly.ai measures AI Share-of-Voice via citation frequency and recommendation prominence across generative platforms, a metric backlink analysis cannot approximate.

The calculation: divide your brand's total mentions across a defined prompt set by the total mentions of every tracked brand (yours included) across the same prompts, then multiply by 100. Four things to track:

- Informational prompts ("what is revenue attribution?") versus evaluative prompts ("what's the best revenue attribution tool for B2B?"). SOV differs between the two.

- Platform-level SOV. Your share in ChatGPT and your share in Perplexity will diverge.

- Intent cluster gaps. Prompts where a competitor dominates and you do not reveal topics where their entity authority exceeds yours.

- Trend over time. Monthly SOV snapshots show whether content investments are shifting the competitive balance.
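
As a rough sketch of the Share-of-Voice calculation, the Python below counts brand mentions per answer and converts them to percentages. It assumes the denominator includes every tracked brand, your own among them, so the shares sum to 100. The function name `share_of_voice`, the input shape, and the brand names are hypothetical.

```python
from collections import Counter


def share_of_voice(answers):
    """Percent of all brand mentions that belong to each brand.

    `answers` is a list of lists: the brand names mentioned in each answer.
    Assumption: the denominator counts every tracked brand, yours included.
    """
    counts = Counter(brand for brands in answers for brand in brands)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: 100.0 * n / total for brand, n in counts.items()}


# Hypothetical logs, kept separate by prompt intent.
informational = [["OurBrand", "RivalCo"], ["OurBrand"], []]
evaluative = [["RivalCo"], ["RivalCo", "OtherCo"], ["OurBrand", "RivalCo"]]

sov_informational = share_of_voice(informational)
sov_evaluative = share_of_voice(evaluative)
```

In this sample, `OurBrand` holds roughly two thirds of informational mentions but only a fifth of evaluative ones. Running the same function on per-platform or per-month slices of the log gives the platform-level and trend views.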

Pairing SOV data with intent signals that surface in-market accounts connects AI visibility to pipeline, not just brand metrics.

What is Citation Rate, and how do you track it across ChatGPT, Perplexity, and Claude?

Citation rate measures how frequently an AI engine names and links your brand as a source inside a generated answer. It signals trust to the reader, not to a crawler.

Indexly.ai tracks citation rate as frequency and sentiment of brand mentions across ChatGPT, Perplexity, and Gemini, naming it a core competitive KPI that traditional SEO tools do not measure. Distinguish citation from mention: a citation names your brand as a source with a link (common in Perplexity), while a mention includes your brand name in synthesized prose without attribution (common in ChatGPT's base model). Track frequency and sentiment for each.

Platform-by-platform tracking notes:

- Perplexity cites sources explicitly with links. Log every citation and the surrounding sentence for sentiment.

- ChatGPT with browsing cites sources. The base model does not. Track both modes separately.

- Claude synthesizes without explicit citation in most responses. Mention rate and sentiment matter more than citation count here.
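
One way to keep citations and mentions apart in a log is a small classifier like the sketch below. It makes a simplifying assumption: any linked source on your brand's domain counts as a citation, and a brand name appearing in the prose without such a link counts as a mention. The function `classify_presence`, its parameters, and the `acme.example` domain are hypothetical, and sentiment still has to be logged separately.

```python
from urllib.parse import urlparse


def classify_presence(answer_text, cited_urls, brand_name, brand_domain):
    """Return 'citation', 'mention', or 'absent' for one logged answer."""
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        # Match the brand domain itself or any of its subdomains.
        if host == brand_domain or host.endswith("." + brand_domain):
            return "citation"
    # Simple case-insensitive substring check on the synthesized prose.
    if brand_name.lower() in answer_text.lower():
        return "mention"
    return "absent"


cited = classify_presence(
    "Acme is a popular option for B2B teams.",
    ["https://www.acme.example/pricing"],
    "Acme",
    "acme.example",
)
mentioned = classify_presence(
    "Acme is a popular option for B2B teams.",
    ["https://reviews.example/top-tools"],
    "Acme",
    "acme.example",
)
```

The substring check is deliberately naive. A brand whose name is a common word would need stricter matching before the counts can be trusted.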

Sentiment is not optional. A citation that reads "some users report reliability issues with X" is not equivalent to "X is the category leader for B2B teams." Orbit Media's analysis of traditional versus AI search confirms that citation prominence and framing determine whether a mention drives brand trust or undermines it. According to Sona AI Visibility data, 3 in 4 websites are partially or fully invisible to AI engines, meaning most brands start with a citation rate near zero.

How does Entity Authority differ from Domain Authority, and why do LLMs care about it?

Entity Authority measures how clearly and consistently an AI engine can extract factual, structured information about your brand. Domain Authority reflects how many sites link to you, a signal LLMs do not use when selecting content.

Geneo.app's analysis of Google's AI Overview documentation names extractable facts, chunk clarity, and content provenance as selection signals. None of those map to Domain Authority. Entity Authority asks a different question: does the LLM know your brand as a distinct entity with clear attributes, including category, use case, differentiators, and customer type?

Two signals and one test make the difference concrete:

- DA signal: Number of referring domains pointing at your site.

- Entity Authority signal: Consistency of factual claims about your brand across your site, press coverage, structured data, and third-party sources.

- Competitive gap test: Query an LLM directly. Ask "What do you know about [competitor]?" then ask the same about your brand. The richness and accuracy of each response reveals the entity authority gap.

Nightwatch.io confirms that semantic relationships and entity clarity outweigh backlink volume as trust signals in AI environments. Building entity authority requires consistent schema.org Organization markup, factual press coverage with named claims, Wikidata presence, and structured product and About pages. Connecting those brand signals to revenue attribution closes the loop between visibility investment and pipeline impact.
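
For the schema.org Organization markup mentioned above, a minimal sketch is to generate the JSON-LD from one source of truth so the same facts appear everywhere. The helper `organization_jsonld` and every value passed to it are placeholders. The properties used (`name`, `url`, `description`, `sameAs`) are standard schema.org Organization properties, but which ones matter most to any given AI engine is an assumption.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Build schema.org Organization markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # Profiles that identify the same entity elsewhere, e.g. Wikidata.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


# Placeholder values: replace with your brand's real, consistent facts.
markup = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    description="Revenue attribution software for B2B marketing teams.",
    same_as=["https://www.wikidata.org/wiki/Q0"],
)
```

The resulting string is typically embedded in a page inside a `<script type="application/ld+json">` element.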

What does an effective AI search competitor analysis workflow look like for B2B SaaS marketers?

Start with a prompt library, not a keyword list. Define the 30 to 50 questions your buyers ask at each funnel stage, then systematically test which competitors appear in the answers.

Indexly.ai reports that AI search citations appear in 2 to 6 months versus traditional SEO's 3 to 12 months for ranking gains, making AI competitor analysis faster to act on once you have the right workflow. Six steps:

- Build your prompt library. Map 30 to 50 prompts across awareness ("what is revenue attribution?"), consideration ("best revenue attribution tools for B2B"), and decision ("Sona vs. Dreamdata") stages. Cover informational and evaluative intent separately.

- Run prompts across platforms. Test ChatGPT, Perplexity, Claude, and Google AI Overviews. Log results in a shared tracker with columns for brand mentioned, position in answer, and sentiment.

- Score AAIR and SOV. For each prompt, record which brands appear, in what context, and with what framing. Calculate each brand's inclusion rate and share across the full prompt set.

- Audit your entity footprint. Run your brand name through each LLM and compare the richness of the response to your top competitors. Gaps in factual detail reveal entity authority weaknesses.

- Identify content gaps. Prompts where competitors appear and you do not reveal topics where your content is absent or too thin to be extracted.

- Run a technical AI visibility audit. Use Sona AI Visibility to check crawlability, schema markup, content structure, and freshness. Fix technical gaps before publishing more content.
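
The tracker and the content-gap step can be sketched as follows. This assumes one tracker row per brand mention, with the columns named in the workflow (brand mentioned, position in answer, sentiment). The `TrackerRow` class, the `content_gaps` function, the sentiment labels, and the brand names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrackerRow:
    platform: str   # e.g. "Perplexity"
    stage: str      # "awareness", "consideration", or "decision"
    prompt: str
    brand: str      # brand mentioned in the answer
    position: int   # order of appearance in the answer, 1 = first
    sentiment: str  # hypothetical labels: "positive", "neutral", "negative"


def content_gaps(rows, own_brand):
    """Map each (platform, prompt) where other brands appear but own_brand does not."""
    brands_by_answer = {}
    for row in rows:
        key = (row.platform, row.prompt)
        brands_by_answer.setdefault(key, set()).add(row.brand)
    return {
        key: sorted(brands)
        for key, brands in brands_by_answer.items()
        if own_brand not in brands
    }


PROMPT = "best revenue attribution tools for B2B"
rows = [
    TrackerRow("Perplexity", "consideration", PROMPT, "RivalCo", 1, "positive"),
    TrackerRow("Perplexity", "consideration", PROMPT, "OurBrand", 2, "neutral"),
    TrackerRow("ChatGPT", "consideration", PROMPT, "RivalCo", 1, "positive"),
    TrackerRow("ChatGPT", "awareness", "what is revenue attribution?", "OtherCo", 1, "neutral"),
]

gaps = content_gaps(rows, "OurBrand")
```

Here the Perplexity answer is not a gap because `OurBrand` appears in it, while both ChatGPT answers are. Keying on platform and prompt together keeps the per-platform view intact.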

For tools that automate this workflow, the pillar guide covers the full competitive landscape of GEO platforms available today.

Frequently asked questions

Can I use Semrush or Ahrefs for AI search competitor analysis?

Not directly. These tools track Google SERP rankings and backlink profiles. They do not query LLMs, track citation rates, or measure prompt Share-of-Voice. Use them for traditional SEO alongside a separate AI visibility workflow. The two measurement systems address different questions and require different data sources.

What is the fastest way to benchmark my AI search visibility against competitors?

Build a 20-prompt test set covering your core category queries. Run each prompt in ChatGPT, Perplexity, and Claude. Log which competitors appear in answers and calculate each brand's inclusion rate across the prompt set. That gives you a baseline AAIR benchmark you can repeat monthly to track movement.

Does improving my traditional SEO automatically improve my AI search visibility?

Partially. Structured content, schema markup, and authoritative sources help both. But AI search also requires entity clarity, prompt-specific relevance, and content formatted for extraction. Keyword rankings do not measure any of those factors, so traditional SEO gains and AI visibility gains do not move in lockstep.

How often should I run AI search competitor analysis?

Monthly at minimum. LLM training and retrieval layers update frequently. A competitor absent from AI answers in January may dominate by March if they publish structured, citable content at scale.

What is the difference between a citation and a mention in AI search?

A citation names your brand as a source with a link, which is common in Perplexity. A mention includes your brand name in synthesized prose without attribution, which is common in ChatGPT's base model. Track frequency and sentiment for each type separately, since the competitive implications differ.

Is entity authority something I can build, or is it determined by LLM training data?

You can build it. Consistent schema.org Organization markup, factual press coverage with named claims, Wikidata entries, and clearly structured product and About pages all increase the probability that LLMs extract accurate, positive entity information about your brand. Retrieval-augmented systems pull from live sources, so structured content published today can shift your entity footprint within weeks.

Last updated: April 2026