Why do traditional SEO tools miss competitor visibility in AI search?

Traditional SEO tools track where pages rank in blue-link results. They were not built to track whether an LLM cites your competitor when a buyer asks a question.

A competitor can rank number one organically and never appear in a ChatGPT or Perplexity answer. Conversely, a competitor with mediocre organic rankings can dominate AI-generated answers because their content is structured, authoritative, and schema-rich. Rank and citation are two different signals. Most tools only measure one.

This matters especially in B2B. B2B buyers run longer research cycles and increasingly use AI assistants to shortlist vendors before visiting a website. According to data from Sona AI Visibility, 60% of Google searches now end without a click, so a growing share of buyers get their answer, often an AI-generated one, before any ranked page gets seen.

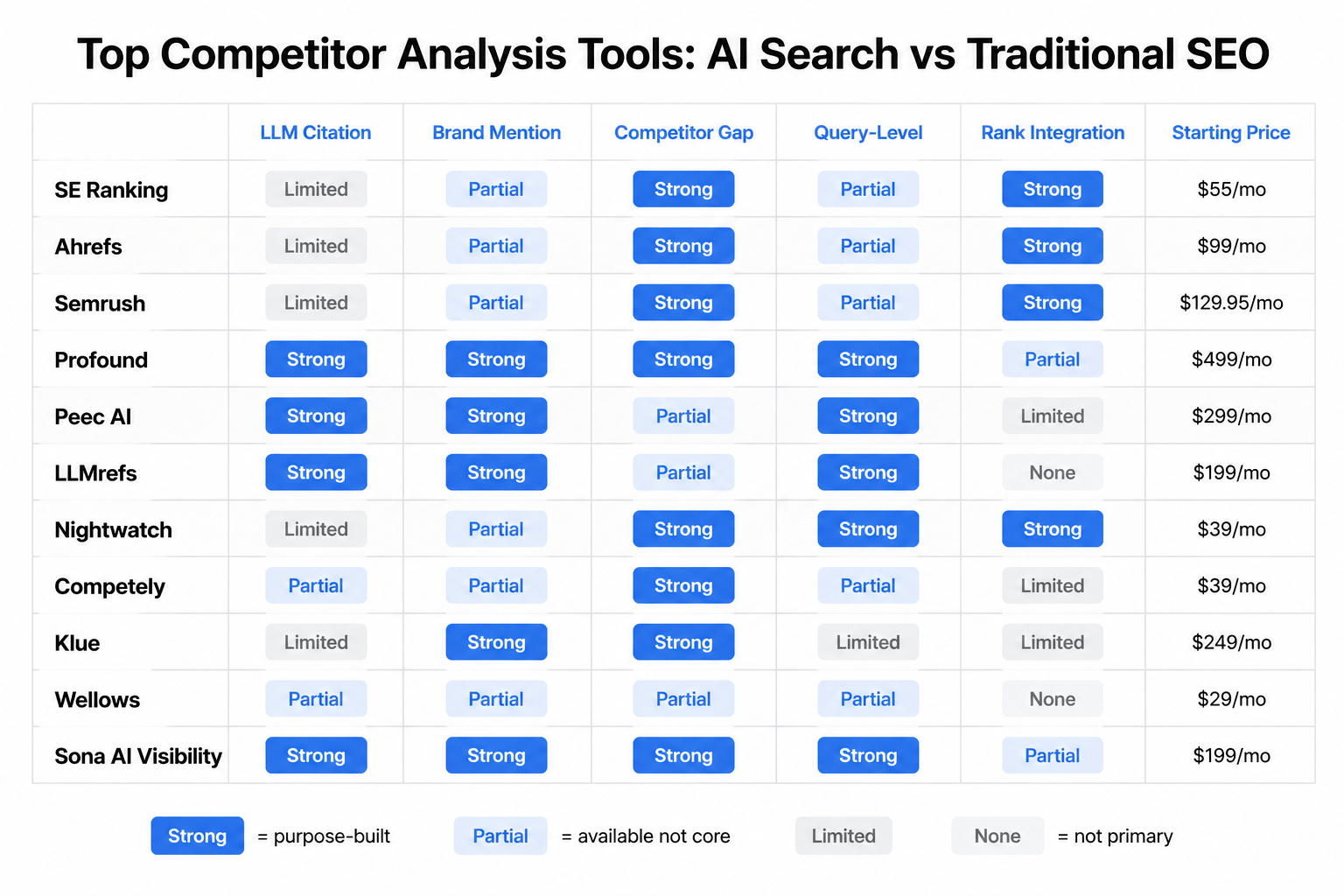

Five dimensions now separate useful competitor analysis from incomplete analysis: LLM citation tracking, brand mention monitoring in AI engines, competitor gap analysis, query-level insights, and traditional rank tracking integration. An AI visibility audit gives you a baseline across all five before you start benchmarking competitors.

Semrush and Ahrefs are mature, trusted platforms, but their LLM features are add-ons, and neither offers deep native citation tracking at the prompt level. Hybrid tools close most of the gap. Pure LLM-native tools close the rest.

What are the five dimensions that separate AI search tools from traditional ones?

Every tool in this category claims to cover "AI search," but that label bundles five distinct capabilities. The stakes are real: audits run across 1,000+ sites by Sona AI Visibility found that 3 in 4 websites are partially or fully invisible to AI engines. Knowing which dimension your competitor exploits tells you where to act.

- LLM Citation Tracking. Does the tool tell you which queries trigger your competitor's brand inside ChatGPT, Perplexity, or Claude? Not just impressions, but actual citation events tied to specific prompts. This is the core capability traditional SEO tools lack natively.

- Brand Mention Monitoring in AI Engines. Does it surface when a competitor is named in AI-generated answers, not just on web pages? Sentiment matters here too. A competitor mentioned negatively in AI answers is a different competitive signal than one cited as a recommended solution.

- Competitor Gap Analysis. Does it identify which topics, queries, or knowledge areas your competitors own in AI results that you do not? Gap analysis in LLM environments is about which questions the AI answers using your competitor's content instead of yours.

- Query-Level Insights. Can you see the specific prompts or question types that surface competitors, not just keyword clusters? This granularity shows what buyers are actually asking and, when paired with first-party intent data, which accounts are doing the research.

- Traditional Rank Tracking Integration. Does it connect LLM visibility data to organic rank data so you can prioritize where to act first? A competitor ranking third organically but cited in 70% of AI answers is a bigger threat than their rank suggests.

How do the top tools compare across all five dimensions?

No tool scores perfectly across all five dimensions. AI competitor analysis tools range from free (Google Alerts) to $3,000+ per month for enterprise platforms, with meaningful capability differences at every price tier, as documented by Visualping's 2026 tool analysis.

Rating key: Strong = purpose-built capability. Partial = available but not core. Limited/None = not a primary feature.

Established SEO platforms (SE Ranking, Ahrefs, Semrush) score well on rank tracking integration but show gaps in query-level LLM citation depth. LLM-native tools (Profound, Peec AI, Wellows) score well on citation and query-level data but lack organic rank infrastructure. The strongest single-tool choice for teams that need both layers is SE Ranking. Teams that need a free starting point can run a free AI visibility audit on their own site before committing to any paid tool.

Which tools are best for agencies versus in-house teams?

Agencies need multi-client dashboards, white-label reporting, and breadth across LLM engines. In-house teams need depth on a single competitive set and direct integration with their existing stack.

For agencies:

- SE Ranking's multi-client workspace structure handles LLM and organic data in one report, with white-label options that hold up in client presentations.

- Nightwatch offers an entry point at approximately $39/month for agencies managing rank tracking at scale, with LLM features being added incrementally.

- Wellows is built for multi-client LLM monitoring, with prompt-level citation data that agencies can use to show clients exactly where competitors appear in AI answers.

- Semrush's Traffic and Market Toolkit covers competitive landscape analysis across multiple clients at scale, making it the default choice for agencies already inside the Semrush ecosystem.

For in-house teams:

- Ahrefs Brand Radar tracks brand citations across ChatGPT, Perplexity, and Gemini with competitive benchmarking. It is the strongest option for in-house SEO leads who already use Ahrefs for organic research.

- Peec AI is purpose-built for LLM citation tracking without the overhead of a full SEO suite. For in-house teams whose primary question is "where does our competitor appear in AI answers," Peec AI answers it faster than any established platform.

- Competely delivers fast qualitative gap analysis for in-house teams that need competitive snapshots without analyst headcount. It does not replace deep citation tracking, but it surfaces positioning gaps in minutes.

- Klue is built for sales and product teams that need battlecards and SWOT analysis, not keyword data. Klue's own competitive intelligence research reports that AI-powered competitive intelligence from its platform increases win rates by 28% while requiring one-third of the headcount for deal-specific analysis.

Most in-house teams at B2B companies with under 200 employees do not need enterprise pricing. SE Ranking at approximately $119/month or Peec AI's freemium tier covers the core LLM competitor tracking workflow. What these tools do not do is score accounts by ICP fit alongside competitor tracking; that layer comes from revenue attribution platforms.

How do emerging LLM-native tools compare to established SEO platforms?

Established platforms like Semrush and Ahrefs retrofitted LLM features onto SEO foundations. Emerging tools like Profound, LLMrefs, and Peec AI were built from the ground up for generative engine visibility. That architectural difference shows up in the data.

Established platforms (Semrush, Ahrefs, SE Ranking):

These tools carry years of historical data, deep keyword and backlink infrastructure, and the institutional trust of SEO teams. Their LLM features are add-ons, not core architecture, which means query-level citation data is still limited compared to purpose-built tools. Semrush Copilot gives AI-powered competitor recommendations and growth tracking but does not natively track ChatGPT citations at the prompt level. SE Ranking is the strongest hybrid. As documented by SEO Hacker's 2025 LLM visibility tool comparison, Ahrefs Brand Radar enables direct AI-versus-organic comparison in market landscape mapping, surfacing where competitors gain LLM citations without organic rank gains.

LLM-native tools (Profound, Peec AI, LLMrefs, Wellows):

These tools interrogate generative engines directly, track prompt-level citations, and surface sentiment and source attribution. Profound leads on GEO citation analysis and sentiment scoring, making it the strongest option for product and content teams that need to understand why an LLM cites a competitor, not just that it does. LLMrefs and Peec AI are leaner, faster to deploy, and better suited to teams that do not need full SEO suites. Their weakness is the absence of historical organic data, backlink analysis, and rank tracking.

When to use each:

- Use established platforms when you need LLM data alongside organic, paid, and backlink data in one workflow.

- Use LLM-native tools when your primary question is "which AI engine cites my competitor and why."

- Use both when you are building a full competitive picture across traditional and generative search.

Tools like Ahrefs and Profound use repeated querying, historical benchmarking, and sentiment averaging to produce stable trend data. Because the same prompt can return different answers from one run to the next, single-query snapshots are unreliable; query data aggregated over time is far more stable. Pairing that data with first-party intent signals gives you a picture of both what AI says about your competitors and which accounts are actively researching them.
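To make the case for aggregation concrete, here is a minimal sketch in Python. It assumes you have logged, for each repeated run of the same prompt, whether a competitor was cited. The function name `citation_rate` and the sample log are hypothetical, and this is not a description of how Ahrefs or Profound compute their metrics.

```python
from math import sqrt

def citation_rate(runs: list[bool]) -> tuple[float, float]:
    """Return (share of runs that cited the brand, standard error of that share)."""
    if not runs:
        raise ValueError("need at least one run")
    n = len(runs)
    rate = sum(runs) / n
    # Standard error of a proportion; it shrinks as the number of runs grows.
    stderr = sqrt(rate * (1 - rate) / n)
    return rate, stderr

# Hypothetical log: the same prompt asked 10 times, True = competitor was cited.
runs = [True, False, True, True, False, True, True, False, True, True]
rate, stderr = citation_rate(runs)
print(f"citation rate: {rate:.0%} (+/- {stderr:.0%})")
```

Any single run in that log would read as either "always cited" or "never cited." The aggregate gives a rate plus a rough sense of how much to trust it.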

What should product teams and SEO teams each prioritize in a tool?

Product teams need to know which competitors own specific knowledge areas in AI answers. SEO teams need to know which queries to target to displace those competitors. The right tool depends entirely on which question you are trying to answer.

Product teams:

The primary need is competitor gap analysis and brand mention monitoring in AI engines. The best tools for this use case are Klue (battlecards, SWOT analysis, and Sparks AI for synthesizing a quarter's market news into structured intelligence), Profound (GEO sentiment and source attribution), and Competely (fast qualitative AI reports that do not require analyst time to interpret). According to Figma's AI competitor analysis resource library, Crayon monitors over 100 data types for real-time competitor shifts, making it the strongest option for product teams tracking feature launches and positioning changes across AI-generated content. Rank tracking, backlink data, and keyword volume are SEO metrics and should be ignored in product team tool selection.

SEO teams:

The primary need is query-level insights and rank tracking integration with LLM citation data. The best tools here are SE Ranking (strongest hybrid), Ahrefs (Brand Radar plus organic), Nightwatch (rank-focused with LLM additions), and Semrush (full-stack with Copilot). The metric SEO teams should watch most closely is the gap between organic rank and LLM citation rate. A competitor ranking third organically but cited in 70% of AI answers is a bigger competitive threat than their rank suggests.
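The rank-versus-citation gap can be sketched as a simple filter. The example below is illustrative only: the `Competitor` fields, the thresholds (`min_rank=3`, `min_citation_rate=0.5`), and the sample figures are assumptions, and you would fill in the numbers from whichever rank tracker and citation tool you use.

```python
from dataclasses import dataclass

@dataclass
class Competitor:
    name: str
    organic_rank: int      # position in blue-link results (1 = top)
    citation_rate: float   # share of tracked AI answers citing them, 0.0 to 1.0

def hidden_threats(competitors, min_rank=3, min_citation_rate=0.5):
    """Competitors with a high AI citation rate despite a non-leading organic rank."""
    flagged = [
        c for c in competitors
        if c.organic_rank >= min_rank and c.citation_rate >= min_citation_rate
    ]
    # Highest citation rate first, since those are the most urgent.
    return sorted(flagged, key=lambda c: c.citation_rate, reverse=True)

# Hypothetical competitive set.
competitors = [
    Competitor("A", organic_rank=1, citation_rate=0.10),
    Competitor("B", organic_rank=3, citation_rate=0.70),
    Competitor("C", organic_rank=8, citation_rate=0.55),
    Competitor("D", organic_rank=5, citation_rate=0.20),
]
for c in hidden_threats(competitors):
    print(c.name, c.organic_rank, f"{c.citation_rate:.0%}")
```

Competitor A would top a rank report but drops out here, while B and C surface because their citation rates outrun their rankings.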

If your team owns revenue attribution and demand generation, you need both layers. Start with a free AI visibility audit to benchmark your own site, then layer in a competitor tracking tool that matches your primary use case.

How do you build a competitor analysis workflow that covers both AI search and traditional SEO?

The most effective workflow runs a three-layer stack: an AI visibility audit layer, a citation monitoring layer, and a traditional rank-and-backlink layer, each feeding into a single competitive brief.

Step 1: Audit your own AI visibility first. Run a free audit at Sona AI Visibility to score your crawlability, schema markup, content structure, and freshness before pulling competitor data. The tool runs 17 checks across up to 15 pages in approximately 30 seconds and surfaces the gaps that make your site invisible to AI engines.

Step 2: Identify which queries surface competitors in AI engines. Use Peec AI, LLMrefs, or Profound to run the 10 to 20 queries your buyers ask most. Note which competitors appear, how often, and in what context. This is your competitive citation map. Traditional SEO tools cannot generate it on their own.
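The citation map from Step 2 is essentially a tally of brands per query. The sketch below assumes you have saved AI answers as plain text next to the query that produced them. The brand names (Acme, Globex, Initech) are made up, and naive substring matching stands in for whatever entity detection a dedicated tool performs.

```python
from collections import Counter, defaultdict

def build_citation_map(answers, brands):
    """Map each query to a Counter of how many recorded answers mention each brand."""
    citation_map = defaultdict(Counter)
    for query, answer_text in answers:
        text = answer_text.lower()
        for brand in brands:
            # Naive case-insensitive substring match (an assumption for illustration).
            if brand.lower() in text:
                citation_map[query][brand] += 1
    return citation_map

# Hypothetical recorded answers: (query, answer text).
answers = [
    ("best crm for manufacturers", "Popular options include Acme CRM and Globex."),
    ("best crm for manufacturers", "Many teams shortlist Acme for its integrations."),
    ("crm pricing comparison", "Globex publishes transparent pricing tiers."),
]
brands = ["Acme", "Globex", "Initech"]

citation_map = build_citation_map(answers, brands)
for query, counts in citation_map.items():
    print(query, dict(counts))
```

Peec AI, LLMrefs, and Profound produce this kind of view for you. The sketch only shows the shape of the data you should expect to get out of Step 2.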

Step 3: Map competitor citation sources. Most LLM citations trace back to a small number of high-authority pages. Use Ahrefs or SE Ranking to identify which of your competitors' ranking pages also generate AI citations. These are your displacement targets, as detailed in GrowthOS's analysis of AI competitor tools.

Step 4: Run a gap analysis on topics you do not own. Use Competely or Profound to identify knowledge areas where competitors appear in AI answers but you do not. The Marketveep competitive analysis guide recommends prioritizing gaps where competitor citations are consistent across multiple AI engines, since those represent entrenched positioning that requires sustained content investment to displace.
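One way to apply that prioritization is sketched below. It assumes citation data keyed by topic and then by engine. The function name `entrenched_gaps`, the `min_engines=2` cutoff, and all brand and topic names are hypothetical.

```python
def entrenched_gaps(citations, us, min_engines=2):
    """Topics where we are never cited and a competitor is cited on >= min_engines engines."""
    gaps = {}
    for topic, by_engine in citations.items():
        if any(us in brands for brands in by_engine.values()):
            continue  # we already appear somewhere for this topic
        engines_per_brand = {}
        for engine, brands in by_engine.items():
            for brand in brands:
                engines_per_brand.setdefault(brand, set()).add(engine)
        entrenched = sorted(
            brand for brand, engines in engines_per_brand.items()
            if len(engines) >= min_engines
        )
        if entrenched:
            gaps[topic] = entrenched
    return gaps

# Hypothetical data: topic -> engine -> set of brands cited.
citations = {
    "soc 2 compliance": {
        "chatgpt": {"Acme"},
        "perplexity": {"Acme", "Globex"},
        "gemini": {"Acme"},
    },
    "pricing": {"chatgpt": {"Us", "Acme"}, "perplexity": {"Acme"}},
    "onboarding": {"chatgpt": {"Globex"}, "perplexity": set()},
}
print(entrenched_gaps(citations, us="Us"))
```

In this sample, only "soc 2 compliance" qualifies: Acme is cited on all three engines and "Us" on none. The "onboarding" topic is still a gap, but a competitor holds it on one engine only, so it ranks lower.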

Step 5: Set a monitoring cadence, not a one-time audit. LLM citation patterns shift as models update, competitors publish new content, and buyer query patterns evolve. Set a monthly review cadence using your chosen citation monitoring tool. Flag any competitor that gains more than 10 percentage points in citation rate month-over-month as a signal warranting an immediate content response.
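The 10-point rule from Step 5 is easy to automate once you export monthly citation rates. The sketch below assumes rates are expressed as percentages (0 to 100) and treats a competitor missing from last month's data as starting at 0. The function name and the sample figures are hypothetical.

```python
def flag_citation_jumps(previous, current, threshold_pp=10.0):
    """Return {competitor: gain in percentage points} for gains above the threshold."""
    flagged = {}
    for name, rate_now in current.items():
        # Assumption: a competitor with no prior data starts from 0%.
        gain = rate_now - previous.get(name, 0.0)
        if gain > threshold_pp:
            flagged[name] = gain
    return flagged

# Hypothetical citation rates (percent of tracked answers) for two months.
previous = {"Acme": 22.0, "Globex": 40.0}
current = {"Acme": 35.0, "Globex": 45.0, "Initech": 8.0}

for name, gain in flag_citation_jumps(previous, current).items():
    print(f"{name}: +{gain:.0f} pp month-over-month")
```

Note that the comparison is in percentage points, not relative growth: a move from 22% to 35% is a 13-point gain and gets flagged, while 40% to 45% does not.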

Frequently asked questions

What is the difference between LLM citation tracking and traditional rank tracking?

Traditional rank tracking measures where a page appears in Google's blue-link results for a given keyword. LLM citation tracking measures whether an AI engine like ChatGPT, Perplexity, or Claude references a brand or page when answering a specific question. A page can rank first organically and never appear in AI-generated answers. Tools like Profound, Peec AI, and Ahrefs Brand Radar track citations specifically, while traditional tools like Semrush and Nightwatch track organic rank. The two metrics are complementary, not interchangeable.

Which tool is best for a small in-house B2B marketing team on a limited budget?

SE Ranking at approximately $119/month covers both LLM citation tracking and organic rank data in one platform, making it the strongest value option for in-house teams. Peec AI's freemium tier works for teams whose only question is LLM citation frequency. Competely's freemium tier handles quick qualitative gap analysis. Starting with a free audit at Sona AI Visibility gives any team a baseline before committing budget to a paid tool.

Can I rely on a single tool to cover all five dimensions?

No tool currently scores at full strength across all five dimensions. SE Ranking comes closest for teams that need a hybrid SEO-plus-LLM solution. Profound leads on LLM-native capabilities but lacks rank tracking integration. The practical answer for most teams is a two-tool stack: one established SEO platform for rank and backlink data, and one LLM-native tool for citation and query-level insights.

How often do LLM citation patterns change?

Citation patterns shift with every major model update, every significant piece of new content a competitor publishes, and every change in how buyers phrase their questions. Monthly monitoring is the minimum cadence for competitive markets. Teams in fast-moving categories should run weekly citation checks on their top 10 to 20 priority queries. Tools like Wellows and Profound support automated monitoring alerts that flag changes without requiring manual query runs.

Is Semrush sufficient for tracking competitor visibility in AI search?

Semrush covers competitor gap analysis, brand mention monitoring, and rank tracking at a strong level. Where it falls short is native prompt-level LLM citation tracking. For teams already inside the Semrush ecosystem, pairing it with Peec AI or LLMrefs for citation-specific data closes that gap without requiring a full platform switch.

What makes Klue different from SEO-focused competitor tools?

Klue is built for sales and product teams, not SEO teams. It surfaces competitive intelligence in the form of battlecards, SWOT analysis, and market news synthesis via its Sparks AI feature. It does not track organic rank or LLM citations as primary capabilities. Klue's own competitive intelligence research reports that AI-powered competitive intelligence from its platform increases win rates by 28% while requiring one-third of the headcount for deal-specific analysis.

How does Sona AI Visibility fit into a competitor analysis workflow?

Sona AI Visibility is the starting point, not the full workflow. It runs 17 checks across crawlability, schema markup, content structure, and freshness, giving you a scored baseline for your own site before you benchmark competitors. It also surfaces competitor gap data and query-level insights. For B2B marketing teams that need to connect visibility data to revenue attribution and account-level intent, it integrates with the broader Sona platform. The audit is free and takes under a minute to run.

Do these tools work for industries outside technology?

Yes. LLM citation patterns exist across every industry where buyers use AI assistants for research, including professional services, manufacturing, financial services, and healthcare. The five dimensions in this article apply regardless of industry. The gap between organic rank and LLM citation rate is a universal competitive signal, not a technology-sector phenomenon.

Last updated: April 2026